Agents vs Workflows: A Decision Framework for Engineers (Use Cases, Failure Modes, Escalation Paths)

TL;DR

A decision framework for when to use agents vs deterministic workflows, with failure modes and escalation paths for production systems.

Agent discussions often collapse into hype or blanket skepticism. Engineers need a decision framework that starts from task structure, failure cost, and operational constraints.

This post builds on the site's foundations and reliability posts, especially How LLMs Actually Work, Why Models Hallucinate, and Why AI Demos Scale Poorly Into Real Systems.

Why This Matters in Production

Most AI failures in production are not caused by one bad model response. They come from system design choices: unclear requirements, weak evaluation, poor observability, missing guardrails, and rollout practices that assume demos predict real usage.

The goal of this framework is to help teams make better decisions under constraints: define the scope, measure outcomes, and make trade-offs explicit instead of relying on intuition alone.

When To Use This Approach

- You are deciding between a deterministic workflow and an agentic loop.

- A team proposes an “agent” and you need to evaluate whether the complexity is justified.

- You want to ship tool-using systems safely without over-automating risky decisions.

When Not To Use It (Yet)

- You assume agents are automatically better for any multi-step task.

- You cannot define acceptable failure modes or escalation paths.

- You lack validation and observability for tool calls and state transitions.

Common Failure Modes

1. Agent chosen for a fixed process

A deterministic workflow would be cheaper, faster, and easier to test.

2. No state or action constraints

The system can loop, repeat actions, or explore irrelevant tool paths.

3. No escalation path

When uncertainty rises, the system keeps acting instead of asking for input or handing off.

4. Evaluation focuses on demos

Success is measured on curated scenarios rather than repeatability, cost, and incident rate.

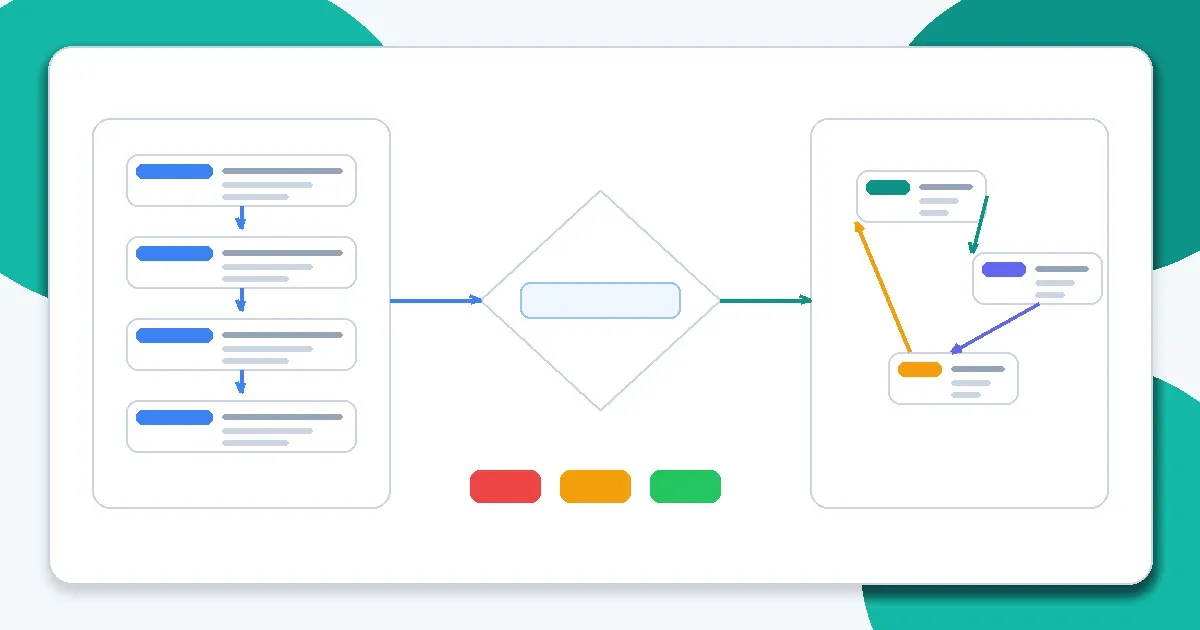

Implementation Workflow

Step 1: Describe the task as a process

List inputs, decisions, tools, and outputs. Identify which steps are deterministic vs ambiguous.
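One way to make this step concrete is to write the task down as data. The sketch below is a minimal illustration, not a prescribed format: the `TaskSpec`, `Step`, and `StepKind` names and the support-ticket steps are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class StepKind(Enum):
    DETERMINISTIC = "deterministic"  # fixed rule or lookup; plain code is enough
    AMBIGUOUS = "ambiguous"          # needs judgment; candidate for an LLM call


@dataclass(frozen=True)
class Step:
    name: str
    kind: StepKind
    tools: tuple[str, ...] = ()


@dataclass(frozen=True)
class TaskSpec:
    inputs: tuple[str, ...]
    outputs: tuple[str, ...]
    steps: tuple[Step, ...]

    def ambiguous_steps(self) -> list[str]:
        """Names of the steps that cannot be handled by a fixed rule."""
        return [s.name for s in self.steps if s.kind is StepKind.AMBIGUOUS]

    def ambiguity_ratio(self) -> float:
        """Share of steps that are ambiguous (0.0 for an empty task)."""
        return len(self.ambiguous_steps()) / len(self.steps) if self.steps else 0.0


# Hypothetical example task: support-ticket triage.
triage = TaskSpec(
    inputs=("ticket_text", "customer_id"),
    outputs=("reply_draft",),
    steps=(
        Step("load_customer", StepKind.DETERMINISTIC, tools=("crm_lookup",)),
        Step("classify_ticket", StepKind.AMBIGUOUS),
        Step("fetch_docs", StepKind.DETERMINISTIC, tools=("doc_search",)),
        Step("draft_reply", StepKind.AMBIGUOUS),
    ),
)

print(triage.ambiguous_steps())
print(triage.ambiguity_ratio())
```

Writing the spec this way makes it obvious which steps need a model at all; here only two of the four do.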

Step 2: Score ambiguity and failure cost

High ambiguity may favor agentic planning; high failure cost favors deterministic control and approvals.
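The scoring rule can be written as a small function. This is a sketch under stated assumptions: the 1 to 5 scale, the threshold of 4, and the returned labels are illustrative choices, not calibrated values.

```python
def recommend_architecture(ambiguity: int, failure_cost: int) -> str:
    """Map two 1-5 scores to a starting architecture.

    The threshold (>= 4 counts as "high") is an illustrative assumption.
    """
    for name, value in (("ambiguity", ambiguity), ("failure_cost", failure_cost)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")

    high_ambiguity = ambiguity >= 4
    high_cost = failure_cost >= 4

    if high_ambiguity and high_cost:
        # Planning is useful, but every consequential action needs sign-off
        return "agent with approval gates"
    if high_ambiguity:
        return "constrained agent loop"
    if high_cost:
        return "deterministic workflow with human approval"
    return "deterministic workflow with LLM decision points"


print(recommend_architecture(ambiguity=2, failure_cost=5))
```

The output is a starting point for discussion, not a verdict; teams would adjust the thresholds to their own risk tolerance.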

Step 3: Choose the minimum autonomy needed

Start with workflow + LLM decision points before full autonomous loops.
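A workflow with a single LLM decision point might look like the sketch below. The route names, handler steps, and `fake_classifier` stand-in are hypothetical; in a real system `classify` would wrap a model call.

```python
from typing import Callable

ROUTES = ("billing", "technical", "other")  # closed set the model may choose from


def route_ticket(text: str, classify: Callable[[str], str]) -> str:
    """Run one LLM decision point inside an otherwise fixed workflow."""
    label = classify(text).strip().lower()
    # Anything outside the closed set falls back to a safe default
    return label if label in ROUTES else "other"


def handle_ticket(text: str, classify: Callable[[str], str]) -> list[str]:
    """Return the fixed step sequence for a ticket; only routing uses the model."""
    handlers = {
        "billing": ["lookup_invoice", "draft_reply"],
        "technical": ["search_docs", "draft_reply"],
        "other": ["queue_for_human"],
    }
    return handlers[route_ticket(text, classify)]


def fake_classifier(text: str) -> str:
    """Stand-in for a real model call, including one malformed answer."""
    return "Billing" if "invoice" in text.lower() else "something unexpected"


print(handle_ticket("My invoice is wrong", fake_classifier))
print(handle_ticket("Hello?", fake_classifier))
```

The model never chooses which tools run or in what order. It only picks a branch, and an unexpected answer degrades to the human queue instead of an open-ended loop.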

Step 4: Define action constraints

Schema validation, allow-lists, budget caps, and step limits reduce operational risk.
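The four constraints can be combined in a single guard that every proposed tool call must pass. This is a minimal sketch: the tool registry, the argument schemas, and the default limits are hypothetical, and a production system would likely use a full schema validator rather than type checks.

```python
from dataclasses import dataclass


class ConstraintViolation(Exception):
    """Raised when a proposed tool call breaks a constraint."""


# Hypothetical allow-list: tool name -> required argument names and types
ALLOWED_TOOLS = {
    "search_docs": {"query": str},
    "get_order": {"order_id": int},
}


@dataclass
class ActionGuard:
    max_steps: int = 5
    max_cost_usd: float = 0.50
    steps_used: int = 0
    cost_used_usd: float = 0.0

    def check(self, tool: str, args: dict, est_cost_usd: float) -> None:
        """Raise ConstraintViolation unless the call passes every constraint."""
        if tool not in ALLOWED_TOOLS:
            raise ConstraintViolation(f"tool not on allow-list: {tool}")
        schema = ALLOWED_TOOLS[tool]
        if set(args) != set(schema):
            raise ConstraintViolation(f"unexpected or missing arguments for {tool}")
        for name, expected in schema.items():
            if not isinstance(args[name], expected):
                raise ConstraintViolation(f"{name} must be {expected.__name__}")
        if self.steps_used + 1 > self.max_steps:
            raise ConstraintViolation("step limit reached")
        if self.cost_used_usd + est_cost_usd > self.max_cost_usd:
            raise ConstraintViolation("budget cap reached")
        # Record usage only after all checks pass
        self.steps_used += 1
        self.cost_used_usd += est_cost_usd


guard = ActionGuard(max_steps=2, max_cost_usd=0.10)
guard.check("search_docs", {"query": "refund policy"}, est_cost_usd=0.04)
print(guard.steps_used, guard.cost_used_usd)
```

Because rejected calls do not consume budget, a caller can catch `ConstraintViolation` and route to an escalation path without corrupting the counters.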

Step 5: Design escalation paths

Specify when the system asks the user, falls back, or hands off to a human/operator.
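Escalation rules are easier to review and test when they live in one pure function. The sketch below assumes the system can produce a confidence score and a count of failed attempts; the thresholds (0.5, 0.8, two attempts) are placeholders, not recommendations.

```python
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    ASK_USER = "ask_user"
    FALLBACK = "fallback"
    HAND_OFF = "hand_off"


def next_action(confidence: float, failed_attempts: int, high_risk: bool) -> Action:
    """Pick an escalation action from simple, testable rules."""
    if high_risk:
        return Action.HAND_OFF   # risky actions always go to a human
    if failed_attempts >= 2:
        return Action.FALLBACK   # stop retrying and take the safe default path
    if confidence < 0.5:
        return Action.HAND_OFF
    if confidence < 0.8:
        return Action.ASK_USER   # a clarifying question may resolve the doubt
    return Action.PROCEED


print(next_action(confidence=0.65, failed_attempts=0, high_risk=False))
```

With the rules isolated like this, each escalation path becomes a one-line unit test.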

Step 6: Evaluate and monitor in production

Measure task success, tool-call correctness, loop frequency, and cost/latency under real workloads.
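Loop frequency can be estimated from tool-call traces. The sketch below flags calls repeated with identical arguments; it assumes each trace entry is a dict with `tool` and `args` keys, which is a hypothetical log format, and the threshold of three repeats is arbitrary.

```python
import json
from collections import Counter


def repeated_calls(trace: list[dict], threshold: int = 3) -> dict[str, int]:
    """Return tool calls that occur `threshold` or more times with identical arguments."""
    counts = Counter(
        # Serialize args with sorted keys so equal dicts compare equal
        (call["tool"], json.dumps(call["args"], sort_keys=True))
        for call in trace
    )
    return {f"{tool} {args}": n for (tool, args), n in counts.items() if n >= threshold}


trace = [
    {"tool": "search_docs", "args": {"query": "refund"}},
    {"tool": "search_docs", "args": {"query": "refund"}},
    {"tool": "get_order", "args": {"order_id": 42}},
    {"tool": "search_docs", "args": {"query": "refund"}},
]
print(repeated_calls(trace))
```

Counting flagged traces per day gives a rough loop-frequency signal that can feed an alert or a dashboard.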

Metrics, Checks, and Guardrails

Checks

- The chosen architecture matches task ambiguity and failure cost.

- Tool calls are constrained and validated.

- Loop/step budgets and timeout rules exist.

- Escalation paths are explicit and testable.

Metrics

- Task success by task class - Helps identify where autonomy adds value and where it harms reliability.

- Tool-call accuracy and retry loops - Key signals for agent stability and constraint quality.

- Escalation rate - Shows whether the system is appropriately handing off or over-automating.

- Cost and latency per completed task - Agentic flexibility can be expensive; track economics directly.
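Three of these metrics can be computed from per-run records, as in the sketch below. The record fields (`task_class`, `success`, `escalated`, `cost_usd`) are an assumed log schema, not a standard one.

```python
from collections import defaultdict


def summarize(runs: list[dict]) -> dict[str, dict[str, float]]:
    """Aggregate success, escalation, and cost metrics per task class."""
    by_class = defaultdict(list)
    for run in runs:
        by_class[run["task_class"]].append(run)

    summary = {}
    for task_class, items in by_class.items():
        completed = [r for r in items if r["success"]]
        total_cost = sum(r["cost_usd"] for r in items)
        summary[task_class] = {
            "success_rate": len(completed) / len(items),
            "escalation_rate": sum(1 for r in items if r["escalated"]) / len(items),
            # Failed runs still cost money, so divide total spend by completions
            "cost_per_completed_task": (
                total_cost / len(completed) if completed else float("inf")
            ),
        }
    return summary


runs = [
    {"task_class": "refund", "success": True, "escalated": False, "cost_usd": 0.25},
    {"task_class": "refund", "success": False, "escalated": True, "cost_usd": 0.25},
    {"task_class": "faq", "success": True, "escalated": False, "cost_usd": 0.125},
]
print(summarize(runs))
```

Dividing total spend by completed tasks, rather than by all runs, keeps the cost of failed attempts visible in the economics.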

Production Trade-offs

- Autonomy vs predictability - More autonomy can handle ambiguity but reduces testability and determinism.

- Generality vs operational simplicity - A flexible agent can cover many scenarios; targeted workflows are easier to maintain and debug.

- User convenience vs risk controls - Approval prompts and guardrails reduce risk but add friction.

Example Scenario

A team planned an agent to triage support tickets, fetch docs, and reply automatically. A workflow with LLM classification plus retrieval and human approval achieved the goal with lower risk and easier monitoring.
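The shape of such a workflow is sketched below. It is not the team's actual implementation: `classify`, `retrieve`, and `write` are hypothetical callables standing in for the LLM classifier, the retrieval step, and the reply drafter.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Draft:
    ticket_id: str
    category: str
    sources: list[str]
    body: str
    approved: bool = False  # set to True only by a human reviewer


def triage(
    ticket_id: str,
    text: str,
    classify: Callable[[str], str],
    retrieve: Callable[[str, str], list[str]],
    write: Callable[[str, list[str]], str],
) -> Draft:
    """Fixed pipeline: classify, retrieve, draft. Nothing is sent here."""
    category = classify(text)
    sources = retrieve(category, text)
    return Draft(ticket_id, category, sources, write(text, sources))


def send(draft: Draft, outbox: list[Draft]) -> None:
    """Refuse to send anything a human has not approved."""
    if not draft.approved:
        raise PermissionError("draft requires human approval before sending")
    outbox.append(draft)


draft = triage(
    "T-1",
    "How do I reset my password?",
    classify=lambda text: "account",
    retrieve=lambda category, text: [f"{category}/reset-password.md"],
    write=lambda text, sources: f"See {sources[0]} for the reset steps.",
)
print(draft)
```

The approval gate lives in `send`, so no change to the upstream steps can bypass it.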

How This Fits With Related Posts

This post bridges the site's prompting and RAG material to newer topics such as evaluation, LLMOps, security, and cost/performance. In practice, move between Prompt Structure Patterns for Production, Output Control with JSON and Schemas, Retrieval Is the Hard Part, and Evaluating RAG Quality depending on where the failure occurs.

Related Context From This Site

These posts are directly relevant to this topic and connect it to the site's foundations, prompting, RAG, and news coverage.

- Prompting Is Not Magic: What Really Changes the Output

- Output Control with JSON and Schemas

- Debugging Bad Prompts Systematically

- Choosing the Right Model for the Job

- The Week the Chatbot Died: Inside the $1.25T Leap into Agentic Space

- Why AI Demos Scale Poorly Into Real Systems