Production Rollouts for AI Features (Shadow Mode, Canary, Guardrails, Rollback)

TL;DR

A rollout strategy for AI features using shadow mode, canaries, guardrails, and rollback plans to reduce production risk.

AI features change behavior as prompts, models, and upstream data change. Rollout strategy matters more than one-time QA because regressions can appear after deployment under real traffic and real edge cases.

This topic builds directly on the foundations and reliability posts on this site, especially How LLMs Actually Work, Why Models Hallucinate, and Why AI Demos Scale Poorly Into Real Systems.

Why This Matters in Production

Most AI failures in production are not caused by one bad model response. They come from system design choices: unclear requirements, weak evaluation, poor observability, missing guardrails, and rollout practices that assume demos predict real usage.

The goal here is to help teams make better decisions under constraints: define scope, measure outcomes, and make trade-offs explicit instead of relying on intuition alone.

When To Use This Approach

- Launching a new AI feature or materially changing model/prompt/retrieval behavior.

- Replacing a baseline heuristic or rules system with AI-driven logic.

- Operating in a domain where bad outputs create trust, compliance, or cost risk.

When Not To Use It (Yet)

- You do not yet have monitoring or rollback controls.

- The launch plan still assumes a direct release to all users because the demo worked, with no stages defined.

- You cannot isolate AI decisions from irreversible downstream actions.

Common Failure Modes

1. Canary without guardrails

Traffic is limited, but the system can still produce unsafe or expensive behavior because no validator or fallback is in place.

2. Shadow mode without evaluation

Teams collect shadow outputs but never compare them against baseline decisions or production outcomes.

3. Rollback is theoretical

A rollback plan exists in docs but not in feature flags, deployment config, or operational ownership.

4. Rollout metrics ignore user impact

Token or latency metrics are tracked, but task completion, escalation rate, or error burden are missing.

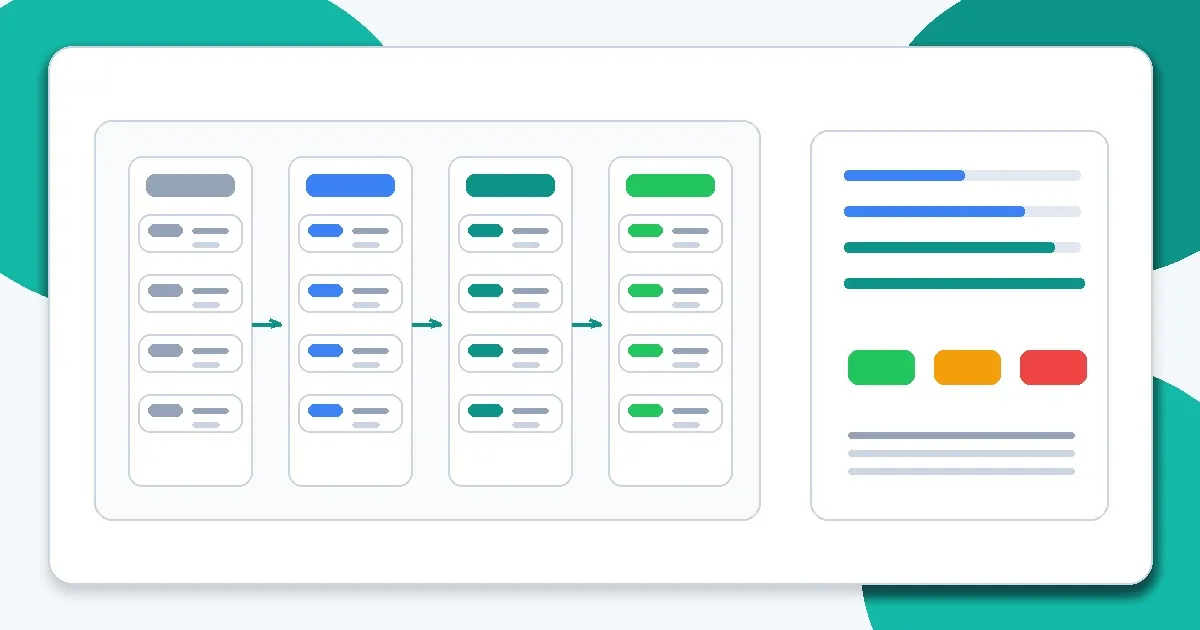

Implementation Workflow

Step 1: Define the deployment unit

Specify whether the rollout is a prompt change, model change, retrieval change, or full pipeline change.
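One way to make the deployment unit explicit is to record it next to the versions it pins, so reviewers can check that what actually changed matches what was declared. This is a minimal sketch under assumptions: `ChangeKind`, `Release`, `DeploymentUnit`, and the three version fields are hypothetical names, not part of any library.

```python
from dataclasses import dataclass
from enum import Enum


class ChangeKind(Enum):
    # The four deployment units named in Step 1.
    PROMPT = "prompt"
    MODEL = "model"
    RETRIEVAL = "retrieval"
    PIPELINE = "pipeline"


# Which pinned field a single-component change is allowed to touch.
_FIELD_FOR_KIND = {
    ChangeKind.PROMPT: "prompt_version",
    ChangeKind.MODEL: "model_id",
    ChangeKind.RETRIEVAL: "retrieval_index",
}


@dataclass(frozen=True)
class Release:
    # Hypothetical version pins; use whatever identifiers your stack exposes.
    prompt_version: str
    model_id: str
    retrieval_index: str


@dataclass(frozen=True)
class DeploymentUnit:
    kind: ChangeKind
    baseline: Release
    candidate: Release

    def changed_fields(self) -> list[str]:
        """Names of pinned fields that differ between baseline and candidate."""
        return [
            name
            for name in ("prompt_version", "model_id", "retrieval_index")
            if getattr(self.candidate, name) != getattr(self.baseline, name)
        ]

    def is_consistent(self) -> bool:
        """True if what actually changed matches the declared kind."""
        changed = self.changed_fields()
        if self.kind is ChangeKind.PIPELINE:
            return len(changed) > 0
        return changed == [_FIELD_FOR_KIND[self.kind]]


unit = DeploymentUnit(
    ChangeKind.PROMPT,
    baseline=Release("v12", "model-a", "idx-1"),
    candidate=Release("v13", "model-a", "idx-1"),
)
print(unit.changed_fields(), unit.is_consistent())  # ['prompt_version'] True
```

A declared "prompt change" that also swaps the model would fail `is_consistent()`, which is exactly the kind of silent scope creep that makes rollout metrics hard to attribute.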

Step 2: Establish a baseline

Capture current performance, cost, reliability, and error rates before changing traffic.
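A baseline can be as simple as a frozen snapshot of a few aggregates computed from request logs over a fixed window. The sketch below assumes a hypothetical per-request record with success, latency, cost, and error fields; the field names and the nearest-rank p95 are illustrative choices, not a prescribed schema.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestRecord:
    # Hypothetical per-request log row; field names are assumptions.
    task_succeeded: bool
    latency_ms: float
    cost_usd: float
    errored: bool


@dataclass(frozen=True)
class Baseline:
    success_rate: float
    p95_latency_ms: float
    mean_cost_usd: float
    error_rate: float


def p95(values: list[float]) -> float:
    # Nearest-rank 95th percentile: the smallest value with at least 95% of
    # samples at or below it.
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def capture_baseline(records: list[RequestRecord]) -> Baseline:
    if not records:
        raise ValueError("need at least one record to form a baseline")
    n = len(records)
    return Baseline(
        success_rate=sum(r.task_succeeded for r in records) / n,
        p95_latency_ms=p95([r.latency_ms for r in records]),
        mean_cost_usd=sum(r.cost_usd for r in records) / n,
        error_rate=sum(r.errored for r in records) / n,
    )
```

Capturing the snapshot before any traffic moves means later comparisons are against a fixed reference rather than a baseline that drifts as the rollout proceeds.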

Step 3: Start in shadow mode

Run the new system on mirrored traffic and compare outcomes without affecting users.
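The core property of shadow mode is that users only ever see the baseline output, while the candidate's output is recorded for comparison. A minimal sketch, assuming both systems are plain callables; `ShadowRecorder` and `handle_request` are hypothetical names, and exact-match agreement is a crude stand-in for a real task-specific evaluator.

```python
import logging
from dataclasses import dataclass, field
from typing import Callable, Optional

log = logging.getLogger("shadow")


@dataclass
class ShadowRecorder:
    """Keeps (query, baseline_output, candidate_output) triples for evaluation."""
    pairs: list[tuple[str, str, Optional[str]]] = field(default_factory=list)

    def agreement_rate(self) -> float:
        # Exact match is a placeholder; swap in a task-specific evaluator.
        scored = [(b, c) for _, b, c in self.pairs if c is not None]
        if not scored:
            return 0.0
        return sum(b == c for b, c in scored) / len(scored)

    def candidate_failure_rate(self) -> float:
        if not self.pairs:
            return 0.0
        return sum(c is None for _, _, c in self.pairs) / len(self.pairs)


def handle_request(
    query: str,
    baseline: Callable[[str], str],
    candidate: Callable[[str], str],
    recorder: ShadowRecorder,
) -> str:
    answer = baseline(query)  # the user only ever sees this
    try:
        # Runs inline for clarity; a real system would mirror traffic
        # asynchronously so the shadow call adds no user-facing latency.
        shadow_answer: Optional[str] = candidate(query)
    except Exception:
        log.exception("shadow candidate failed")
        shadow_answer = None
    recorder.pairs.append((query, answer, shadow_answer))
    return answer
```

Computing `agreement_rate()` and `candidate_failure_rate()` on a schedule is what keeps shadow mode from turning into the "shadow without evaluation" failure mode: outputs are collected and actually compared.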

Step 4: Move to a gated canary

Expose limited traffic behind validators, fallbacks, and kill switches.
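A gated canary combines three controls in the request path: a traffic split, a validator with a fallback, and a kill switch. The sketch below assumes the flag values would normally come from a feature-flag service; `CanaryGate`, `answer`, and the `validate` callable are hypothetical. Hashing the user id keeps each user in the same arm across requests.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable


@dataclass
class CanaryGate:
    # Hypothetical flag values; in practice these live in a feature-flag service.
    percent: float             # share of traffic (0-100) eligible for the candidate
    kill_switch: bool = False  # when True, everyone gets the baseline

    def in_canary(self, user_id: str) -> bool:
        # Deterministic bucketing: same user id -> same bucket on every request.
        digest = hashlib.sha256(user_id.encode("utf-8")).digest()
        bucket = int.from_bytes(digest[:8], "big") % 10_000
        return bucket < self.percent * 100


def answer(
    user_id: str,
    query: str,
    gate: CanaryGate,
    candidate: Callable[[str], str],
    baseline: Callable[[str], str],
    validate: Callable[[str], bool],
) -> tuple[str, str]:
    """Return (response, arm), where arm is 'baseline', 'candidate', or 'fallback'."""
    if gate.kill_switch or not gate.in_canary(user_id):
        return baseline(query), "baseline"
    try:
        draft = candidate(query)
    except Exception:
        return baseline(query), "fallback"  # candidate crashed: serve baseline
    if not validate(draft):
        return baseline(query), "fallback"  # validator rejected the output
    return draft, "candidate"
```

Returning the arm alongside the response makes it straightforward to log fallback rates per arm, which is one of the signals the later metrics depend on.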

Step 5: Expand by segment

Increase coverage by user tier, geography, or use case while watching key metrics and failure buckets.
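Expanding by segment can be modeled as one ramp per segment, each advancing only while its own metrics stay healthy. A minimal sketch, assuming a hypothetical fixed ramp schedule and a health signal computed elsewhere; `RAMP_STEPS` and `SegmentRollout` are illustrative names, and the rule that a paused segment waits for a human to resume it is a design assumption.

```python
from dataclasses import dataclass

# Hypothetical ramp schedule: percentage of a segment's traffic on the candidate.
RAMP_STEPS = [1, 5, 25, 50, 100]


@dataclass
class SegmentRollout:
    name: str            # e.g. "free-tier", "premium", "eu"
    step_index: int = 0  # position in RAMP_STEPS
    paused: bool = False

    @property
    def percent(self) -> int:
        return RAMP_STEPS[self.step_index]

    def advance(self, healthy: bool) -> int:
        """Move one step forward if healthy; pause at the current step otherwise."""
        if not healthy:
            self.paused = True
        elif not self.paused and self.step_index < len(RAMP_STEPS) - 1:
            self.step_index += 1
        return self.percent

    def resume(self) -> None:
        # A person clears the pause after investigating; the ramp never self-resumes.
        self.paused = False
```

Because each segment ramps independently, a regression in one tier pauses only that tier instead of being averaged away by healthier segments.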

Step 6: Document decision and rollback criteria

Predefine which metric thresholds or incidents trigger a pause, a rollback, or further expansion, and who owns that call.
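Writing the criteria as code forces the thresholds to be explicit before the canary starts. The sketch below is one possible policy under stated assumptions: the threshold values in `Criteria` are placeholders, and the split between hard stops (rollback) and soft signals (pause) is an illustrative choice, not a standard.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXPAND = "expand"
    PAUSE = "pause"
    ROLLBACK = "rollback"


@dataclass(frozen=True)
class Criteria:
    # Placeholder thresholds; real values belong in the rollout plan, with an owner.
    max_success_drop: float = 0.02   # absolute drop in task success rate
    max_fallback_rise: float = 0.05  # absolute rise in fallback rate
    max_latency_ratio: float = 1.25  # candidate p95 / baseline p95
    max_safety_failures: int = 0     # any safety/validation failure beyond this rolls back


def decide(
    c: Criteria,
    success_delta: float,
    fallback_delta: float,
    latency_ratio: float,
    safety_failures: int,
    open_incident: bool,
) -> Decision:
    # Hard stops first: incidents, safety failures, and success regressions revert.
    if open_incident or safety_failures > c.max_safety_failures:
        return Decision.ROLLBACK
    if success_delta < -c.max_success_drop:
        return Decision.ROLLBACK
    # Soft signals pause the ramp for investigation instead of reverting.
    if fallback_delta > c.max_fallback_rise or latency_ratio > c.max_latency_ratio:
        return Decision.PAUSE
    return Decision.EXPAND
```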

Metrics, Checks, and Guardrails

Checks

- Feature flag / traffic split exists and is tested.

- Fallback behavior is defined and monitored.

- Rollback criteria are explicit and owned.

- Deployment metrics include user outcomes and operational constraints.
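The checks above can double as a preflight gate that blocks the rollout until each one passes. This is a sketch with hypothetical field and metric names; a real gate would read these from deployment config and the flag service rather than a hand-built object.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical metric names, grouped by what they measure.
USER_OUTCOME_METRICS = {"task_success", "escalation_rate", "fallback_rate"}
OPERATIONAL_METRICS = {"p95_latency", "cost_per_request", "error_rate"}


@dataclass
class RolloutPlan:
    flag_name: Optional[str]
    flag_tested: bool
    fallback_defined: bool
    fallback_monitored: bool
    rollback_criteria: dict[str, float]
    rollback_owner: Optional[str]
    tracked_metrics: set[str] = field(default_factory=set)


def preflight_failures(plan: RolloutPlan) -> list[str]:
    """Return the checks that fail; an empty list means the rollout may start."""
    failures = []
    if not plan.flag_name or not plan.flag_tested:
        failures.append("traffic split flag missing or untested")
    if not (plan.fallback_defined and plan.fallback_monitored):
        failures.append("fallback not defined or not monitored")
    if not plan.rollback_criteria or not plan.rollback_owner:
        failures.append("rollback criteria or owner missing")
    if not plan.tracked_metrics & USER_OUTCOME_METRICS:
        failures.append("no user-outcome metric tracked")
    if not plan.tracked_metrics & OPERATIONAL_METRICS:
        failures.append("no operational metric tracked")
    return failures
```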

Metrics

- Task success delta vs baseline - Primary signal for whether the rollout improves the product.

- Escalation/refusal/fallback rates - Detects hidden degradation even when completions remain fluent.

- Latency and cost deltas - Canary success should include operational viability.

- Safety and validation failures - Critical for automated or high-trust workflows.
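All four metrics reduce to per-request rates compared between the canary and the baseline over the same window. A minimal sketch, assuming hypothetical aggregate counters per arm; note that raw deltas from a small canary are noisy, so a real dashboard would also show sample sizes or confidence intervals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ArmStats:
    # Hypothetical aggregate counters for one arm over a shared time window.
    requests: int
    successes: int
    escalations: int
    refusals: int
    fallbacks: int
    safety_failures: int
    total_latency_ms: float
    total_cost_usd: float


def _per_request(s: ArmStats) -> dict[str, float]:
    n = s.requests or 1  # avoid dividing by zero on an empty arm
    return {
        "task_success_rate": s.successes / n,
        "escalation_rate": s.escalations / n,
        "refusal_rate": s.refusals / n,
        "fallback_rate": s.fallbacks / n,
        "safety_failure_rate": s.safety_failures / n,
        "mean_latency_ms": s.total_latency_ms / n,
        "mean_cost_usd": s.total_cost_usd / n,
    }


def delta_report(baseline: ArmStats, canary: ArmStats) -> dict[str, float]:
    """Canary minus baseline for each metric (positive means the canary is higher)."""
    b, c = _per_request(baseline), _per_request(canary)
    return {name: c[name] - b[name] for name in b}
```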

Production Trade-offs

- Rollout speed vs blast radius - Fast ramps reduce coordination overhead but increase incident impact if the system regresses.

- Strict guardrails vs user coverage - Tighter guardrails reduce risk but may cause over-refusal or increased fallback rates during early rollout.

- Shadow fidelity vs engineering cost - High-fidelity shadowing is valuable but may require substantial integration work.

Example Scenario

A support reply assistant looked fine in QA but increased escalation workload for premium users. Segment-based canary metrics revealed the issue before full rollout.
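The scenario turns on breaking metrics down by segment instead of reading only the aggregate. The sketch below assumes hypothetical event tuples of `(segment, arm, escalated)` and a tolerance chosen by the team; the function names are illustrative.

```python
from collections import defaultdict
from typing import Iterable


def escalation_by_segment(
    events: Iterable[tuple[str, str, bool]],
) -> dict[tuple[str, str], float]:
    """Escalation rate keyed by (segment, arm); arm is 'baseline' or 'canary'."""
    counts: dict[tuple[str, str], list[int]] = defaultdict(lambda: [0, 0])
    for segment, arm, escalated in events:
        bucket = counts[(segment, arm)]
        bucket[0] += int(escalated)  # escalations
        bucket[1] += 1               # total requests
    return {key: esc / total for key, (esc, total) in counts.items()}


def regressed_segments(
    rates: dict[tuple[str, str], float], tolerance: float
) -> list[str]:
    """Segments whose canary escalation rate exceeds baseline by more than tolerance."""
    flagged = []
    for segment in sorted({seg for seg, _ in rates}):
        base = rates.get((segment, "baseline"))
        canary = rates.get((segment, "canary"))
        if base is not None and canary is not None and canary - base > tolerance:
            flagged.append(segment)
    return flagged
```

If a large segment improves while a small one regresses, the aggregate rate can stay flat or even fall; the per-segment comparison is what surfaces the premium-tier regression before the ramp reaches everyone.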

How This Fits With Related Topics

Use this post as a bridge from prompting and RAG to evaluation, LLMOps, security, and cost/performance. In practice, move between Prompt Structure Patterns for Production, Output Control with JSON and Schemas, Retrieval Is the Hard Part, and Evaluating RAG Quality depending on where the failure occurs.

Related Context From This Site

These posts connect this topic to the foundations, prompting, RAG, and news coverage on this site.

- Choosing the Right Model for the Job

- Output Control with JSON and Schemas

- Debugging Bad Prompts Systematically

- Why AI Demos Scale Poorly Into Real Systems

- Why Every Smarter Model Also Increases System Risk

- Why JSON Output Alone Does Not Make AI Safe

Read Next

Prompt Injection and Retrieval Poisoning: Practical Defenses for Production Systems

Practical defenses against prompt injection and retrieval poisoning, with engineering patterns for containment, validation, and incident response.
