Prompt Injection and Retrieval Poisoning: Practical Defenses for Production Systems

— Practical defenses against prompt injection and retrieval poisoning, with engineering patterns for containment, validation, and incident response.

TL;DR

Practical defenses against prompt injection and retrieval poisoning, with engineering patterns for containment, validation, and incident response.

As soon as a system consumes external text, users can influence behavior through content. Security work cannot stop at prompt phrasing; it must cover retrieval, execution, and policy boundaries.

This topic connects directly to your existing foundations and reliability framing, especially How LLMs Actually Work, Why Models Hallucinate, and Why AI Demos Scale Poorly Into Real Systems.

Why This Matters in Production

Most AI failures in production are not caused by one bad model response. They come from system design choices: unclear requirements, weak evaluation, poor observability, missing guardrails, and rollout practices that assume demos predict real usage.

A useful engineering article on this topic should help teams make better decisions under constraints. That means defining scope, measuring outcomes, and making trade-offs explicit instead of relying on intuition alone.

When To Use This Approach

- Your system reads user-provided documents, web pages, or third-party content.

- Your model can call tools, execute actions, or produce structured commands.

- You need practical mitigations that engineering can ship incrementally.

When Not To Use It (Yet)

- You treat prompt injection as something a longer system prompt can solve alone.

- Your design allows model output to trigger privileged actions without validation.

- You ignore the content ingestion path and only secure runtime prompts.

Common Failure Modes

1. Trusting retrieved text as instructions

Retrieved documents are treated like system policy instead of untrusted data to be analyzed.

2. No separation of data and control

Prompt templates mix tool instructions, policy, and user/retrieved content without boundaries or labels.

3. Unvalidated tool execution

Model output becomes API calls or system actions without schema validation and allow-list checks.

4. No ingest-time defenses

Poisoned content enters the knowledge base with no provenance tags, review, or scanning.

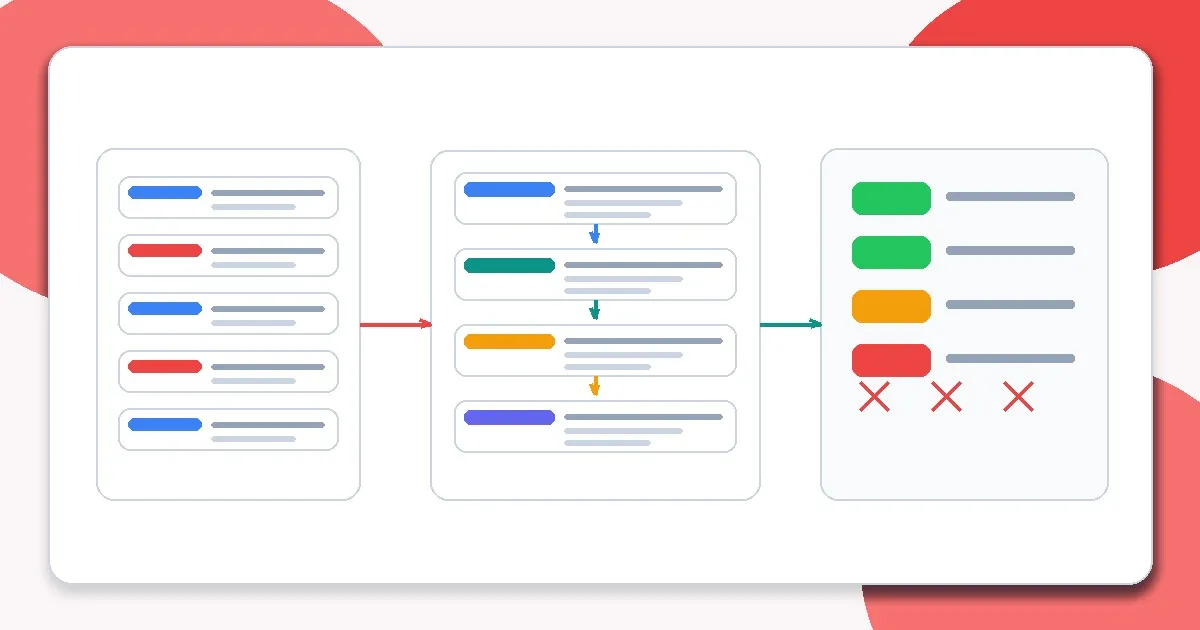

Implementation Workflow

Step 1: Model the attack surface

List where untrusted text enters: user inputs, uploaded docs, crawled content, vendor APIs, and tool outputs.

Step 2: Separate instructions from evidence

Structure prompts so system policy and tool rules are isolated from user and retrieval content.

Step 3: Constrain output channels

Require strict schemas and validate values before any downstream execution.

Step 4: Add tool-use policy gates

Use allow-lists, permission checks, and human approval for sensitive actions.

Step 5: Harden retrieval ingestion

Track provenance, scan documents, and apply review policies for high-risk sources.

Step 6: Test with adversarial cases

Add injection and poisoning scenarios to evaluation and regression sets.

Metrics, Checks, and Guardrails

Checks

- Untrusted content is labeled and isolated in prompts.

- Tool calls are validated against schemas and policy checks.

- Sensitive actions require explicit authorization or human confirmation.

- Adversarial cases are included in ongoing evals.

Metrics

- Injection success rate in test cases - Primary signal for whether defenses are actually working.

- Unsafe tool-call attempt rate - Tracks blocked or attempted execution of disallowed actions.

- False positive block rate - Measures whether defenses are harming legitimate usage.

- Time to detect and patch new attack patterns - Security posture is about response speed as well as prevention.

Production Trade-offs

- Security strictness vs usability - Aggressive blocking reduces risk but can degrade user experience or legitimate automation success.

- Manual review vs throughput - Reviewing high-risk sources improves trust but can slow ingestion pipelines.

- Schema rigidity vs feature flexibility - Tighter schemas reduce exploit surface but may slow new capability rollout.

Example Scenario

A retrieved support article includes hidden instructions telling the model to ignore policies and leak secrets. Labeling retrieval text as untrusted evidence plus strict tool-call validation prevents escalation into an action-level incident.

How This Fits Your Existing Content Graph

Use this post to bridge your current strengths in prompting and RAG to newer paths such as evaluation, LLMOps, security, and cost/performance. In practice, readers should move between Prompt Structure Patterns for Production, Output Control with JSON and Schemas, Retrieval Is the Hard Part, and Evaluating RAG Quality depending on where the failure occurs.

Related Context From This Site

These links are directly relevant to this topic and help connect it to your existing foundations, prompting, RAG, and news coverage.

- Why RAG Exists (And When Not to Use It)

- Retrieval Is the Hard Part

- Output Control with JSON and Schemas

- Prompt Anti-patterns Engineers Fall Into

- Why JSON Output Alone Does Not Make AI Safe

- Why Every Smarter Model Also Increases System Risk

- Why Most RAG Systems Fail in Production

Read Next

Continue learning

Next in this path

PII and Sensitive Data in LLM Apps (Redaction, Storage Boundaries, Access Controls)

A practical guide to handling PII and sensitive data in LLM applications, including redaction strategies, storage boundaries, and access controls.

Intentional links