PII and Sensitive Data in LLM Apps (Redaction, Storage Boundaries, Access Controls)

A practical guide to handling PII and sensitive data in LLM applications, including redaction strategies, storage boundaries, and access controls.

TL;DR

AI features often centralize data from many systems, which increases privacy risk even when the model itself is not the root issue. Engineers need practical boundaries for what is stored, logged, and sent to third parties.

This topic builds on the foundations and reliability posts, especially How LLMs Actually Work, Why Models Hallucinate, and Why AI Demos Scale Poorly Into Real Systems.

Why This Matters in Production

Most AI failures in production are not caused by one bad model response. They come from system design choices: unclear requirements, weak evaluation, poor observability, missing guardrails, and rollout practices that assume demos predict real usage.

Handling sensitive data well means making decisions under constraints: define the scope, measure outcomes, and make trade-offs explicit instead of relying on intuition alone.

When To Use This Approach

- Your feature processes customer messages, support transcripts, forms, or documents that may contain PII.

- You log prompts/responses or use external model providers.

- You need an engineering design for privacy controls before scaling usage.

When Not To Use It (Yet)

- You assume vendor contracts alone solve application-level privacy design.

- You log everything first and plan to redact later.

- You cannot map which systems send data into the AI pipeline.

Common Failure Modes

1. Over-logging raw content

Debug logs capture full prompts and responses with sensitive content, creating long-lived exposure.

2. No field-level classification

All input text is treated the same, so high-risk data is sent to providers unnecessarily.

3. Retention defaults remain unlimited

Sensitive artifacts persist long after the operational need ends.

4. Access controls are too broad

Too many internal roles can read prompts, responses, and evaluation artifacts.

Implementation Workflow

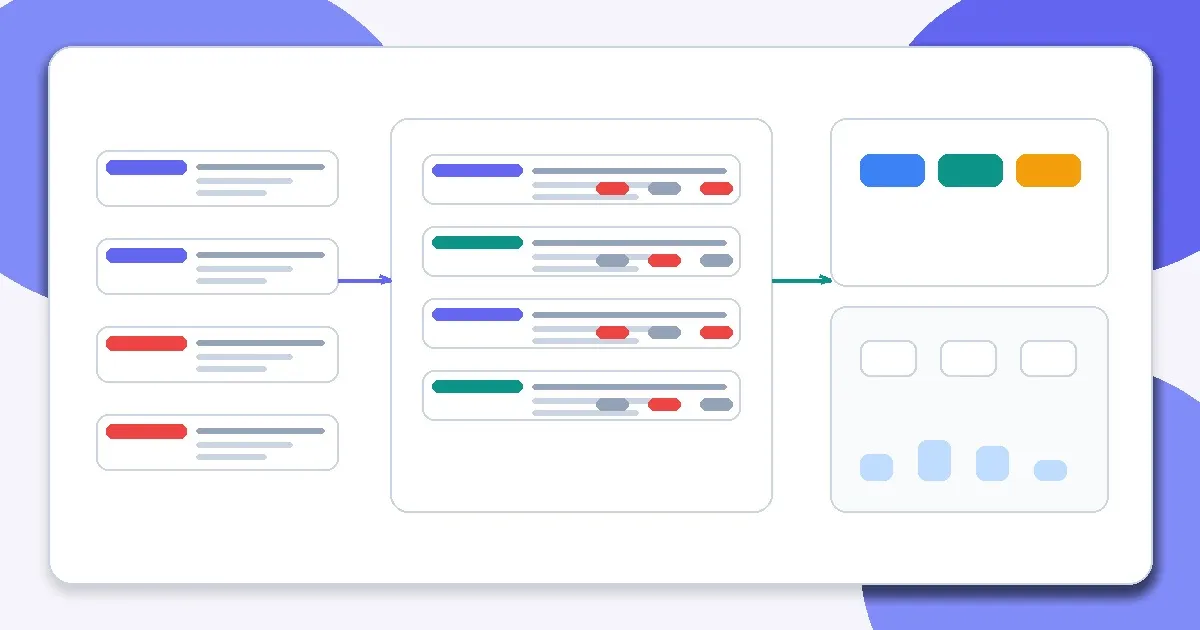

Step 1: Map data flows

Identify which inputs, outputs, logs, traces, and eval artifacts can contain sensitive information.
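
One way to make the data-flow map concrete is a small inventory kept in code and reviewed alongside the pipeline. The sketch below is illustrative only: the `DataFlow` type, the flow names, and the `leaves_boundary` flag are assumptions, not part of any particular framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    name: str               # hypothetical label for a pipeline stage
    kind: str               # "input", "output", "log", "trace", or "eval"
    may_contain_pii: bool   # can sensitive data appear here?
    leaves_boundary: bool   # True if the data is sent to a third party


# Hypothetical inventory for a support-summarization feature.
FLOWS = [
    DataFlow("support_transcript", "input", True, True),
    DataFlow("model_summary", "output", True, False),
    DataFlow("debug_log", "log", True, False),
    DataFlow("latency_trace", "trace", False, False),
    DataFlow("eval_dataset", "eval", True, False),
]


def flows_needing_review(flows):
    """Return names of flows that can carry PII, externally shared ones first."""
    risky = [f for f in flows if f.may_contain_pii]
    # sorted() is stable, so the original order is kept within each group.
    risky = sorted(risky, key=lambda f: not f.leaves_boundary)
    return [f.name for f in risky]
```

Calling `flows_needing_review(FLOWS)` gives a review list that starts with the flow leaving your boundary, which is usually the first one to examine.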

Step 2: Classify data and define policy

Set categories (for example public, internal, confidential, regulated) with handling rules for each.
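
As a minimal sketch, the categories and their handling rules can live in one table that the rest of the pipeline consults. The field names, retention values, and the `HandlingRule` shape below are assumptions chosen for illustration; your own policy will differ.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3


@dataclass(frozen=True)
class HandlingRule:
    send_to_provider: bool  # may this data go to an external model provider?
    log_raw: bool           # may this data be logged without redaction?
    retention_days: int     # how long stored copies may live


# Hypothetical policy table; the numbers are placeholders, not recommendations.
POLICY = {
    Sensitivity.PUBLIC: HandlingRule(True, True, 365),
    Sensitivity.INTERNAL: HandlingRule(True, True, 90),
    Sensitivity.CONFIDENTIAL: HandlingRule(True, False, 30),
    Sensitivity.REGULATED: HandlingRule(False, False, 7),
}

# Hypothetical field-level classification for a support ticket record.
FIELD_CLASSES = {
    "ticket_subject": Sensitivity.INTERNAL,
    "message_body": Sensitivity.CONFIDENTIAL,
    "ssn": Sensitivity.REGULATED,
}


def rule_for(field: str) -> HandlingRule:
    # Unclassified fields fall back to the most restrictive category.
    level = FIELD_CLASSES.get(field, Sensitivity.REGULATED)
    return POLICY[level]


def provider_payload(record: dict) -> dict:
    """Keep only the fields the policy allows to leave for a provider."""
    return {k: v for k, v in record.items() if rule_for(k).send_to_provider}
```

Defaulting unknown fields to the strictest category is a deliberate choice in this sketch: a new field stays inside the boundary until someone classifies it.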

Step 3: Redact or minimize before model calls

Strip or tokenize unnecessary identifiers where the task does not require them.
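
The sketch below shows one possible form of tokenization: identifiers are replaced with placeholder tokens before the model call, and the mapping stays inside your own boundary so that values can be restored in the response if needed. The two regular expressions are deliberately simple assumptions (emails and one US-style phone format) and will miss many real-world variants. Production systems typically combine patterns with a dedicated PII detector.

```python
import re

# Simplified illustrative patterns; not exhaustive.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def tokenize_pii(text: str):
    """Replace emails and phone numbers with tokens; return (text, mapping)."""
    mapping = {}  # token -> original value (keep this server-side only)
    seen = {}     # original value -> token, so repeats reuse one token
    counts = {}   # per-label counter used to number tokens

    def replacer(label):
        def replace(match):
            value = match.group(0)
            if value not in seen:
                counts[label] = counts.get(label, 0) + 1
                token = f"<{label}_{counts[label]}>"
                seen[value] = token
                mapping[token] = value
            return seen[value]
        return replace

    text = EMAIL_RE.sub(replacer("EMAIL"), text)
    text = PHONE_RE.sub(replacer("PHONE"), text)
    return text, mapping


def restore(text: str, mapping: dict) -> str:
    """Put original values back into text that still contains tokens."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text
```

Reusing the same token for a repeated value keeps the text coherent for the model (it can still tell that two mentions refer to the same contact) without exposing the value itself.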

Step 4: Set storage boundaries and retention

Separate transient inference data from long-lived analytics or training artifacts; expire what you do not need.
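
A retention policy is easier to enforce when each store has an explicit time-to-live and a sweep job applies it. The following is a sketch under assumed store names and durations. `StoredItem` and `sweep` are hypothetical helpers, and a real system would delete from the underlying storage rather than filter a list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical per-store retention windows.
RETENTION = {
    "inference_payload": timedelta(hours=24),  # transient request data
    "redacted_log": timedelta(days=30),
    "eval_artifact": timedelta(days=180),      # long-lived, reviewed separately
}


@dataclass
class StoredItem:
    item_id: str
    store: str
    created_at: datetime


def expired(item: StoredItem, now: datetime) -> bool:
    # Unknown stores get zero retention, so unclassified data expires at once.
    ttl = RETENTION.get(item.store, timedelta(0))
    return now - item.created_at >= ttl


def sweep(items, now=None):
    """Split items into those to keep and the ids that should be deleted."""
    now = now or datetime.now(timezone.utc)
    kept = [i for i in items if not expired(i, now)]
    deleted = [i.item_id for i in items if expired(i, now)]
    return kept, deleted
```

Returning the deleted ids makes it straightforward to record what the sweep removed, which also feeds a retention compliance metric.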

Step 5: Restrict access

Apply least privilege to logs, traces, and eval datasets. Access controls matter as much as model behavior.
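
As a rough illustration of least privilege with an audit trail, the sketch below grants each role an explicit set of readable resources and records every read attempt, allowed or not. The role names, resource names, and in-memory `audit_log` are assumptions. In practice this would usually sit on top of your identity provider and an append-only log store.

```python
# Hypothetical role-to-resource grants; anything not listed is denied.
ROLE_GRANTS = {
    "support_engineer": {"redacted_logs"},
    "ml_engineer": {"redacted_logs", "eval_datasets"},
    "privacy_officer": {"redacted_logs", "eval_datasets", "raw_traces"},
}

# In-memory stand-in for an append-only audit store.
audit_log = []


def can_read(role: str, resource: str) -> bool:
    # Unknown roles get an empty grant set (deny by default).
    return resource in ROLE_GRANTS.get(role, set())


def read_resource(user: str, role: str, resource: str) -> str:
    """Check access, record the attempt, then return placeholder contents."""
    allowed = can_read(role, resource)
    # Denied attempts are logged too, so probing shows up in audits.
    audit_log.append(
        {"user": user, "role": role, "resource": resource, "allowed": allowed}
    )
    if not allowed:
        raise PermissionError(f"{role} may not read {resource}")
    return f"contents of {resource}"
```

Here a `support_engineer` can read `redacted_logs` but gets a `PermissionError` for `raw_traces`, and both attempts end up in `audit_log`.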

Step 6: Test privacy failure modes

Include redaction failures, over-redaction, and leakage scenarios in QA and regression checks.
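
These scenarios can be written as ordinary regression tests. The sketch below assumes a deliberately simple `redact` function and a hypothetical `build_log_line` helper. The point is the shape of the three checks (missed redaction, over-redaction, leakage of a seeded canary value), not the specific pattern.

```python
import re

# Simplified pattern for US Social Security numbers written with dashes.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    return SSN_RE.sub("[SSN]", text)


def build_log_line(user_message: str) -> str:
    # Hypothetical logger: length metadata plus a short redacted preview.
    return f"summarize_request len={len(user_message)} preview={redact(user_message)[:40]}"


def test_redacts_known_pattern():
    # Redaction failure: the raw value must not survive.
    assert "123-45-6789" not in redact("SSN is 123-45-6789")


def test_does_not_over_redact():
    # Over-redaction: an order number with a different shape must survive.
    text = "Order 1234-56-789 shipped"
    assert redact(text) == text


def test_canary_does_not_leak_into_logs():
    # Leakage: seed a fake value and confirm it never reaches the log line.
    canary = "987-65-4321"
    line = build_log_line(f"My SSN is {canary}, please update my file")
    assert canary not in line


if __name__ == "__main__":
    test_redacts_known_pattern()
    test_does_not_over_redact()
    test_canary_does_not_leak_into_logs()
```

The functions follow the `test_*` naming that pytest collects, but they also run as a plain script.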

Metrics, Checks, and Guardrails

Checks

- The team can explain exactly where PII may appear in the pipeline.

- Redaction/minimization rules are documented and testable.

- Retention periods exist for logs and eval artifacts.

- Access to sensitive AI data is auditable.

Metrics

- Redaction coverage rate - How often sensitive fields are removed or transformed when required.

- Leakage incidents and near-misses - Track confirmed leaks as well as leaks that detection caught before exposure, and use both to improve controls.

- Retention policy compliance - Measures whether data is actually deleted on schedule.

- False redaction rate - Over-redaction can break product quality and should be measured too.
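
Two of the metrics above can be computed from a labeled sample in which you know which items should have been redacted. The definitions in this sketch are assumptions: coverage is the share of expected redactions that actually happened, and the false redaction rate is the share of performed redactions that hit something non-sensitive. Teams may reasonably define either one differently.

```python
def redaction_metrics(expected: set, actual: set) -> dict:
    """Compare items that should be redacted with items that were redacted.

    expected: identifiers of spans or fields labeled sensitive
    actual:   identifiers of spans or fields the redactor changed
    """
    true_hits = expected & actual
    false_hits = actual - expected
    # With nothing to redact, coverage is treated as complete.
    coverage = len(true_hits) / len(expected) if expected else 1.0
    # With no redactions performed, there can be no false redactions.
    false_rate = len(false_hits) / len(actual) if actual else 0.0
    return {
        "coverage_rate": coverage,
        "false_redaction_rate": false_rate,
        "missed": sorted(expected - actual),
    }
```

For example, if the labeled set is `{"email", "phone", "ssn", "name"}` and the redactor changed `{"email", "phone", "ssn", "city"}`, coverage is 0.75 and the false redaction rate is 0.25, with `name` reported as missed.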

Production Trade-offs

- Minimization vs task quality - Removing too much context can reduce model performance. Keep only what is necessary for the task.

- Short retention vs debugging speed - Short retention reduces risk but may limit incident analysis if instrumentation is weak.

- Granular access controls vs developer velocity - Tighter access improves safety but requires better tooling and operational discipline.

Example Scenario

A support summarization service logged raw user messages for debugging. Moving to structured metadata logs plus sampled redacted payloads preserved debugging value while reducing exposure.
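
A hedged sketch of what that change might look like: every request logs structured metadata, and only a deterministic sample also logs a redacted payload. The function names, the email-only pattern, and the default sample rate are assumptions for illustration, not details from the service described.

```python
import hashlib
import json
import re

# Simplified email pattern; a real redactor would cover more identifier types.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact(text: str) -> str:
    return EMAIL_RE.sub("[EMAIL]", text)


def should_sample(request_id: str, rate: float) -> bool:
    # Hash the id into [0, 1) so the same request is always in or out.
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return bucket < rate


def log_record(request_id: str, message: str, latency_ms: int,
               sample_rate: float = 0.05) -> str:
    """Build a JSON log line: metadata always, redacted payload only if sampled."""
    record = {
        "request_id": request_id,
        "message_chars": len(message),  # size metadata, not content
        "latency_ms": latency_ms,
    }
    if should_sample(request_id, sample_rate):
        record["redacted_payload"] = redact(message)
    return json.dumps(record, sort_keys=True)
```

Sampling by hashing the request id, rather than calling a random generator, means a given request is consistently included or excluded across services, which helps when correlating logs during an incident.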

How This Fits With Related Topics

This post connects the prompting and RAG material to later topics such as evaluation, LLMOps, security, and cost/performance. Depending on where a failure occurs, readers may want to move between Prompt Structure Patterns for Production, Output Control with JSON and Schemas, Retrieval Is the Hard Part, and Evaluating RAG Quality.

Related Context From This Site

These posts are directly relevant to this topic and connect it to the site's foundations, prompting, RAG, and news coverage.

- Output Control with JSON and Schemas

- Why Models Hallucinate (And Why That’s Expected)

- Prompt Structure Patterns for Production

- Why JSON Output Alone Does Not Make AI Safe

- Why Every Smarter Model Also Increases System Risk
