Cost Control Patterns for LLM Apps (Routing, Caching, Truncation, Fallbacks)

TL;DR

Control LLM spend with four patterns: routing, caching, truncation, and capped fallbacks. Pair every change with quality metrics so that savings do not come at the expense of user outcomes.

Cost problems appear after adoption, not during demos. Engineers need cost control patterns that preserve user outcomes instead of bluntly cutting model quality.

This topic builds on the foundations and reliability posts on this site, especially How LLMs Actually Work, Why Models Hallucinate, and Why AI Demos Scale Poorly Into Real Systems.

Why This Matters in Production

Most AI failures in production are not caused by one bad model response. They come from system design choices: unclear requirements, weak evaluation, poor observability, missing guardrails, and rollout practices that assume demos predict real usage.

Cost control is the same kind of problem. Teams make better decisions under constraints when they define scope, measure outcomes, and make trade-offs explicit instead of relying on intuition alone.

When To Use This Approach

- Your request volume is increasing and unit economics matter.

- You are using multiple models or complex RAG/tool pipelines.

- You need predictable cost controls before broad rollout.

When Not To Use It (Yet)

- You have not yet proven product value or correctness, so optimizing token cost would be premature.

- You are treating cost control as only a model-switch decision.

- You plan to reduce context or safety checks without measuring the quality impact.

Common Failure Modes

1. One-size-fits-all model usage

Simple requests pay premium model cost because no routing exists.

2. No caching strategy

Repeated prompts, retrieval results, or deterministic outputs are recomputed every time.

3. Context bloat

Teams keep adding instructions and retrieved text without tracking marginal quality gain.

4. Fallback storms

Retries and fallback chains trigger unexpectedly and multiply cost during incidents.

Implementation Workflow

Step 1: Measure cost at request-path level

Break cost down by model calls, retrieval steps, tools, and retries.
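A minimal sketch of this breakdown, assuming each model call is logged with a component label and token counts. The `CallRecord` type, the model names, and the prices in `PRICE_PER_1K` are hypothetical placeholders, not real provider rates.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical per-1K-token prices in USD; substitute your provider's real rates.
PRICE_PER_1K = {
    "small-model": {"input": 0.0005, "output": 0.0015},
    "large-model": {"input": 0.0100, "output": 0.0300},
}


@dataclass(frozen=True)
class CallRecord:
    request_id: str
    component: str       # e.g. "generation", "rerank", "retry" (labels are illustrative)
    model: str
    input_tokens: int
    output_tokens: int


def call_cost(rec: CallRecord) -> float:
    """Cost of one model call, from token counts and the price table."""
    price = PRICE_PER_1K[rec.model]
    return (rec.input_tokens / 1000) * price["input"] + (
        rec.output_tokens / 1000
    ) * price["output"]


def cost_by_component(records) -> dict:
    """Sum call cost per component so retries and retrieval show up separately."""
    totals = defaultdict(float)
    for rec in records:
        totals[rec.component] += call_cost(rec)
    return dict(totals)
```

Tagging retries as their own component is a design choice here: it keeps incident-driven spend visible instead of folding it into the generation total.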

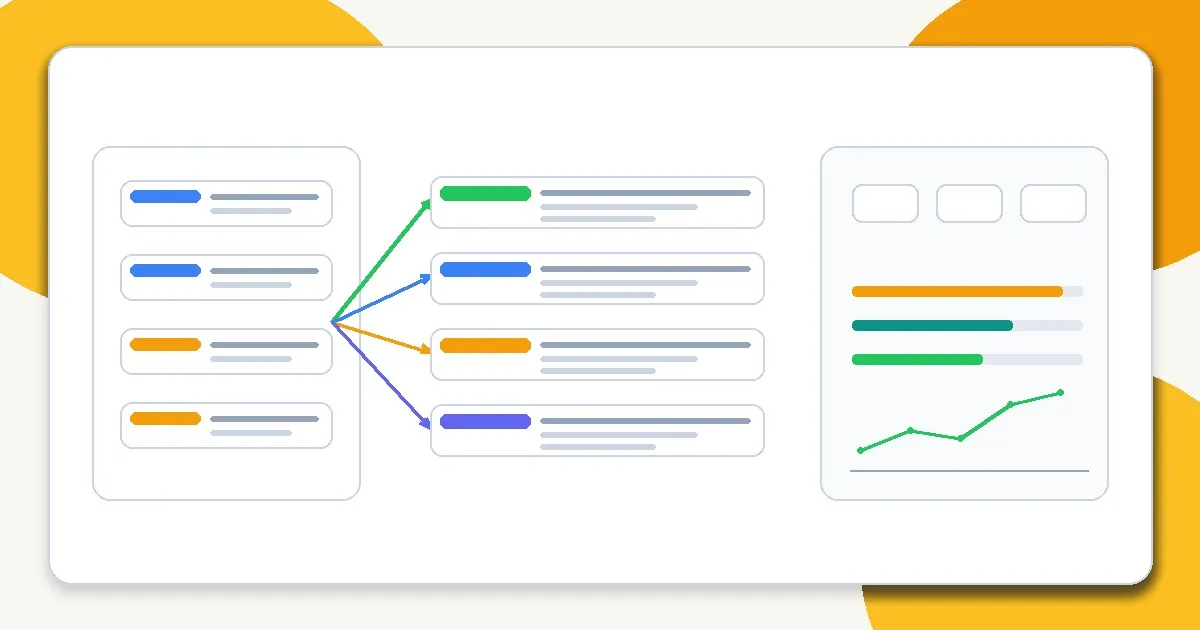

Step 2: Introduce routing

Send simple tasks to cheaper paths and reserve expensive models for ambiguous or high-risk cases.
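One way to make such a rule explicit and testable is a small pure function. This is a sketch under stated assumptions: the model names, the `HIGH_RISK_TASKS` set, the thresholds, and the upstream `classifier_confidence` signal are all hypothetical and would need tuning against your own evaluation data.

```python
from dataclasses import dataclass

CHEAP_MODEL = "small-model"      # hypothetical model name
PREMIUM_MODEL = "large-model"    # hypothetical model name

# Task types that always use the premium path (illustrative values).
HIGH_RISK_TASKS = {"legal_summary", "medical_triage"}


@dataclass(frozen=True)
class Request:
    task_type: str
    prompt: str
    classifier_confidence: float  # 0..1, assumed to come from an upstream intent classifier


def choose_model(
    req: Request, min_confidence: float = 0.85, max_cheap_chars: int = 2000
) -> str:
    """Route to the cheap model only when the request is low-risk, clear, and short."""
    if req.task_type in HIGH_RISK_TASKS:
        return PREMIUM_MODEL
    if req.classifier_confidence < min_confidence:
        return PREMIUM_MODEL  # ambiguous intent: pay for the stronger model
    if len(req.prompt) > max_cheap_chars:
        return PREMIUM_MODEL  # long inputs are treated as harder in this sketch
    return CHEAP_MODEL
```

Because the function has no side effects, routing thresholds can be covered by ordinary unit tests and replayed against logged traffic before rollout.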

Step 3: Apply caching deliberately

Cache deterministic outputs, retrieval candidates, or intermediate transforms where staleness risk is manageable.
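A minimal in-memory sketch of this idea, assuming outputs are deterministic for a given model, prompt, and parameter set (for example, temperature 0). The `TTLCache` class is hypothetical; a production system would more likely use a shared store, and the TTL stands in for whatever staleness limit the data tolerates.

```python
import hashlib
import json
import time


class TTLCache:
    """Tiny in-memory cache with a time-to-live and hit/miss counters."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock          # injectable so expiry can be tested
        self._store = {}            # key -> (stored_at, value)
        self.hits = 0
        self.misses = 0

    @staticmethod
    def make_key(model: str, prompt: str, params: dict) -> str:
        # Include everything that changes the output; sort keys for a stable hash.
        payload = json.dumps(
            {"model": model, "prompt": prompt, "params": params}, sort_keys=True
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_compute(self, key: str, compute):
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]
        self.misses += 1
        value = compute()           # the expensive call, e.g. a model request
        self._store[key] = (now, value)
        return value
```

The hit and miss counters feed the cache hit rate directly, and a short TTL is the simplest lever for trading savings against freshness.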

Step 4: Reduce unnecessary tokens

Tighten prompts, cap retrieval fan-out, and enforce output length budgets based on task needs.
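As an illustration, retrieval fan-out and context size can be capped with a small selection function. This sketch assumes chunks arrive already ranked by relevance; `estimate_tokens` is a rough character-based heuristic used only for the example, and the values in `OUTPUT_TOKEN_BUDGET` are hypothetical.

```python
# Hypothetical per-task output caps, to be passed as the model's max output tokens.
OUTPUT_TOKEN_BUDGET = {"classification": 16, "summary": 256}


def estimate_tokens(text: str) -> int:
    # Rough heuristic (about 4 characters per token for English text).
    # Use your model's real tokenizer in practice.
    return max(1, len(text) // 4)


def select_chunks(ranked_chunks, max_chunks: int, token_budget: int):
    """Keep the top-ranked chunks that fit within both a count cap and a token budget."""
    selected = []
    used = 0
    for chunk in ranked_chunks[:max_chunks]:   # cap retrieval fan-out
        cost = estimate_tokens(chunk)
        if used + cost > token_budget:
            break                              # stop at the first chunk that does not fit
        selected.append(chunk)
        used += cost
    return selected, used
```

Stopping at the first chunk that does not fit preserves rank order; skipping it and trying smaller chunks further down is an alternative that packs the budget more tightly at the cost of relevance ordering.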

Step 5: Design safe fallbacks

Limit retry chains and make fallback behavior explicit so incident traffic does not explode spend.
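A sketch of a capped fallback chain, assuming each provider is a plain callable that raises on failure. The `call_with_fallbacks` function and `BudgetExceeded` exception are hypothetical names; backoff and jitter are omitted to keep the example short.

```python
class BudgetExceeded(Exception):
    """Raised when the attempt cap is reached or every provider has failed."""


def call_with_fallbacks(
    prompt, providers, max_total_attempts=3, max_attempts_per_provider=2
):
    """Try providers in order under a hard cap on total attempts.

    providers: ordered list of (name, callable) pairs.
    Returns (provider_name, result, attempts_used).
    """
    attempts = 0
    last_error = None
    for name, call in providers:
        for _ in range(max_attempts_per_provider):
            if attempts >= max_total_attempts:
                raise BudgetExceeded(f"gave up after {attempts} attempts") from last_error
            attempts += 1
            try:
                return name, call(prompt), attempts
            except Exception as exc:  # in practice, catch only retryable errors
                last_error = exc
    raise BudgetExceeded(f"all providers failed after {attempts} attempts") from last_error
```

The global cap is the important part: per-provider retry limits alone still multiply when several fallbacks are chained, which is how incident traffic inflates spend.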

Step 6: Monitor cost with quality metrics

Every cost optimization should be paired with task success and reliability checks.
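One way to enforce this pairing is a simple release gate that compares a candidate route against the baseline. This is a sketch only: the `RouteStats` fields and the default tolerances are hypothetical and should come from your own evaluation suite and latency targets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RouteStats:
    cost_per_request: float   # USD
    success_rate: float       # fraction of tasks judged successful by your evaluation
    p95_latency_ms: float


def should_ship(
    baseline: RouteStats,
    candidate: RouteStats,
    max_success_drop: float = 0.01,
    max_latency_increase_ms: float = 200.0,
) -> bool:
    """Accept a cost optimization only if quality and latency stay within tolerance."""
    cheaper = candidate.cost_per_request < baseline.cost_per_request
    quality_ok = candidate.success_rate >= baseline.success_rate - max_success_drop
    latency_ok = (
        candidate.p95_latency_ms <= baseline.p95_latency_ms + max_latency_increase_ms
    )
    return cheaper and quality_ok and latency_ok
```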

Metrics, Checks, and Guardrails

Checks

- Cost is measurable by route, feature, and user segment.

- Routing rules are explicit and testable.

- Fallback/retry policies have caps.

- Cost changes are reviewed alongside quality and latency.

Metrics

- Cost per successful task - More useful than cost per request when retries/failures differ across routes.

- Token usage by component - Separates prompt bloat, retrieval bloat, and output verbosity.

- Cache hit rate and cache value - Shows whether caching reduces spend meaningfully without harming freshness.

- Fallback rate and retry multiplier - Critical for incident-driven cost spikes.
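The metrics above reduce to simple ratios over counters you already log. The definitions below are one reasonable reading, not a standard: in particular, "retry multiplier" is assumed here to mean model calls per user request.

```python
def cost_per_successful_task(total_cost: float, successful_tasks: int) -> float:
    """Total spend divided by tasks that actually succeeded."""
    if successful_tasks == 0:
        return float("inf")   # spend with no successes has unbounded unit cost
    return total_cost / successful_tasks


def retry_multiplier(total_model_calls: int, total_requests: int) -> float:
    """Average model calls per user request; 1.0 means no retries or fallbacks."""
    return total_model_calls / total_requests if total_requests else 0.0


def cache_hit_rate(hits: int, misses: int) -> float:
    """Fraction of cache lookups served without recomputation."""
    lookups = hits + misses
    return hits / lookups if lookups else 0.0
```

Computing these per route rather than globally makes it easier to see which path is driving a cost spike.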

Production Trade-offs

- Cheaper models vs correction cost - A cheaper model that increases human rework may raise total system cost.

- Aggressive caching vs freshness - Caching saves money but can degrade correctness for fast-changing information.

- Token reduction vs robustness - Over-compressing prompts or context can hurt reliability and increase retries.

Example Scenario

A classification pipeline cut model cost by routing easy cases to a smaller model, but human-review costs rose. Tracking cost per successful task showed the net gain was smaller than expected and guided better routing thresholds.
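To make the scenario concrete, the sketch below folds human-review cost into cost per successful task. All numbers are invented for illustration and do not come from a real pipeline; the function name and parameters are hypothetical.

```python
def total_cost_per_successful_task(
    n_tasks: int,
    model_cost_per_task: float,
    review_rate: float,         # fraction of tasks escalated to human review
    review_cost: float,         # cost of one human review, USD
    success_rate: float,        # fraction of tasks that end in a correct result
) -> float:
    """Model spend plus human-review spend, divided by successful tasks."""
    model_cost = n_tasks * model_cost_per_task
    human_cost = n_tasks * review_rate * review_cost
    successes = n_tasks * success_rate
    return (model_cost + human_cost) / successes


# Illustrative numbers only: routing cuts average model cost per task by 60%,
# but the smaller model sends more cases to human review.
before = total_cost_per_successful_task(10_000, 0.020, 0.05, 0.50, 0.98)
after = total_cost_per_successful_task(10_000, 0.008, 0.07, 0.50, 0.98)
net_saving = 1 - after / before
```

With these made-up inputs, the 60% reduction in model cost shrinks to a net saving of only a few percent once review cost is included, which is the pattern the scenario describes.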

How This Fits With Related Posts

This post bridges the site's prompting and RAG material to newer paths such as evaluation, LLMOps, security, and cost/performance. In practice, readers should move between Prompt Structure Patterns for Production, Output Control with JSON and Schemas, Retrieval Is the Hard Part, and Evaluating RAG Quality depending on where the failure occurs.

Related Context From This Site

These posts are directly relevant to this topic and cover the site's foundations, prompting, RAG, and news material.

- Choosing the Right Model for the Job

- How LLMs Actually Work: Tokens, Context, and Probability

- Output Control with JSON and Schemas

- Why RAG Exists (And When Not to Use It)

- Why AI Demos Scale Poorly Into Real Systems

- The Intelligence Infrastructure Era: 7 Surprising Takeaways from the AI Frontier

- Chunking Is Still the #1 Bottleneck in RAG
