Build an Eval Harness for Prompt and RAG Changes (Without Overengineering It)

TL;DR

This post shows how to build a lightweight evaluation harness for prompt and RAG changes, so teams can compare revisions without slowing down shipping.

Prompt and RAG changes are often shipped together, which makes failures hard to attribute. A lightweight harness gives you repeatable comparison runs without building a heavy platform first.

This post builds on the site's foundations and reliability material, especially How LLMs Actually Work, Why Models Hallucinate, and Why AI Demos Scale Poorly Into Real Systems.

Why This Matters in Production

Most AI failures in production are not caused by one bad model response. They come from system design choices: unclear requirements, weak evaluation, poor observability, missing guardrails, and rollout practices that assume demos predict real usage.

An evaluation harness targets the weak-evaluation part of that list. It helps teams make better decisions under constraints by defining scope, measuring outcomes, and making trade-offs explicit instead of relying on intuition alone.

When To Use This Approach

- You regularly change prompts, retrieval settings, chunking, or ranking logic.

- You need side-by-side comparisons between baseline and candidate systems.

- You want a repeatable process that fits into engineering iteration speed.

When Not To Use It (Yet)

- You are still testing single prompts manually and have no stable input set.

- You need a full enterprise evaluation platform immediately (start smaller, then scale).

- You cannot capture model/retrieval configs for reproducible runs.

Common Failure Modes

1. Harness runs prompts only

The comparison ignores retrieval, formatting, and validators, so results do not reflect production behavior.

2. No baseline snapshot

Teams compare against memory instead of a fixed baseline run.

3. No artifact storage

Outputs and judgments are not saved, making regression triage impossible.

4. Too much automation too early

Complex tooling delays the basic discipline of repeatable runs and failure review.

Implementation Workflow

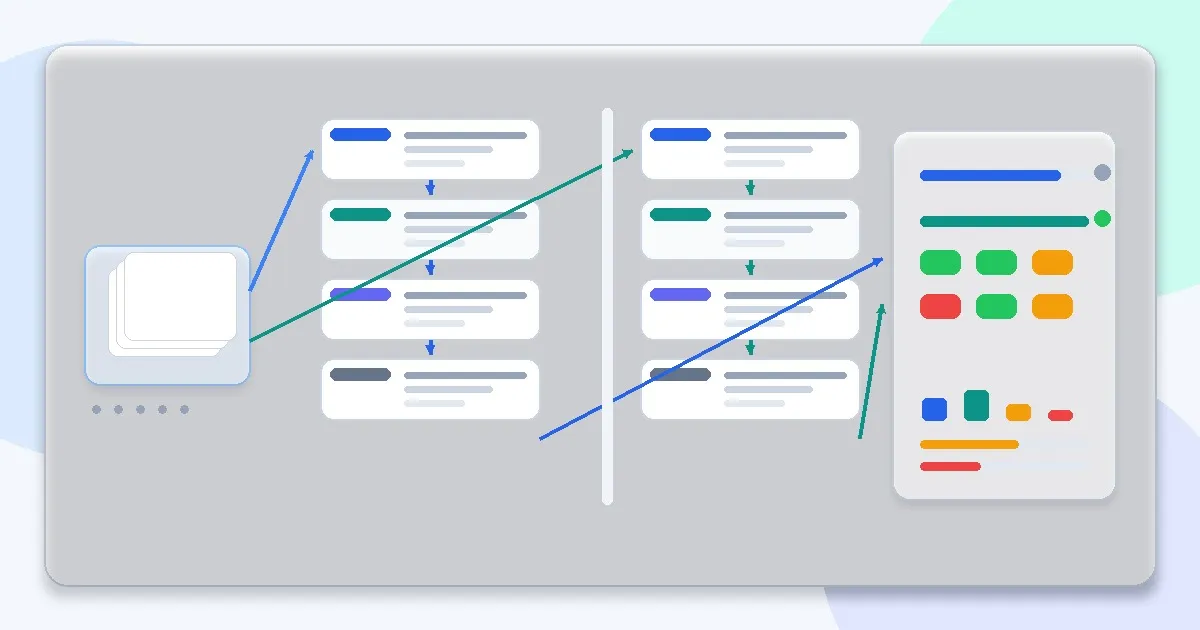

Step 1: Define baseline and candidate configurations

Version the prompt, model, retrieval parameters, and post-processing chain for each run.
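
A minimal sketch of a versioned run configuration, assuming Python dataclasses. The field names (`prompt_template`, `top_k`, `chunk_size`, `postprocessors`) and the model id are hypothetical placeholders, not taken from any particular stack. The fingerprint is one possible way to tell whether two runs used the same settings.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RunConfig:
    """Everything that can change the output of one run (hypothetical fields)."""
    name: str
    prompt_template: str
    model: str
    temperature: float
    top_k: int                             # retrieval: number of chunks to fetch
    chunk_size: int                        # retrieval: chunk length used at index time
    postprocessors: tuple[str, ...] = ()   # ordered post-processing chain

    def fingerprint(self) -> str:
        """Stable short hash of the settings, ignoring the human-readable name."""
        payload = asdict(self)
        payload.pop("name")
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()[:12]


baseline = RunConfig(
    name="baseline",
    prompt_template="Answer using only the context.\n\n{context}\n\nQ: {question}",
    model="example-model-v1",  # placeholder model id
    temperature=0.0,
    top_k=4,
    chunk_size=512,
    postprocessors=("strip_whitespace", "validate_json"),
)

# The candidate changes exactly one setting, so any difference is attributable.
candidate = RunConfig(**{**asdict(baseline), "name": "candidate", "top_k": 8})
```

Storing the fingerprint next to each run's outputs makes it cheap to confirm later which configuration produced which results.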

Step 2: Load a versioned test set

Use a stable set with bucket metadata so you can segment results.
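
One possible shape for such a test set is a JSONL file whose first line carries a version. The sketch below assumes that layout; the `id`, `bucket`, and `question` field names and the bucket labels are illustrative, and the inline sample stands in for a file kept under version control.

```python
import json
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalCase:
    case_id: str
    bucket: str      # segment label, e.g. "billing" or "multi_hop" (hypothetical)
    question: str


def load_test_set(lines, expected_version):
    """Parse JSONL lines; the first non-empty line is a header with the set version."""
    rows = [json.loads(line) for line in lines if line.strip()]
    header, records = rows[0], rows[1:]
    if header.get("version") != expected_version:
        raise ValueError(
            f"test set version {header.get('version')!r} != {expected_version!r}"
        )
    cases = [EvalCase(r["id"], r["bucket"], r["question"]) for r in records]
    ids = [c.case_id for c in cases]
    if len(ids) != len(set(ids)):
        raise ValueError("duplicate case ids in test set")
    return cases


# Inline sample standing in for a versioned JSONL file in the repository.
SAMPLE = """
{"version": "2024-06-01"}
{"id": "q1", "bucket": "billing", "question": "How do I update my card?"}
{"id": "q2", "bucket": "billing", "question": "Why was I charged twice?"}
{"id": "q3", "bucket": "multi_hop", "question": "Which plan includes SSO and audit logs?"}
""".splitlines()

cases = load_test_set(SAMPLE, expected_version="2024-06-01")
bucket_sizes = Counter(c.bucket for c in cases)  # how many cases each bucket holds
```

Failing loudly on a version mismatch keeps a run from silently scoring against a different set than the baseline used.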

Step 3: Run both systems through the same pipeline

Execute the real request path, not isolated prompt snippets.
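
The idea can be sketched as a runner that sends every configuration through one shared pipeline function. Here `fake_retrieve` and `fake_generate` are stand-ins invented for this example; in a real harness they would be the production retriever and model client, and the config keys shown are assumptions.

```python
import time
from typing import Callable


def fake_retrieve(question: str, top_k: int) -> list[str]:
    """Stand-in retriever; a real harness would call the production retriever."""
    corpus = [
        "Cards are updated under Settings > Billing.",
        "Refunds take 5 business days.",
        "SSO is included in the Enterprise plan.",
    ]
    return corpus[:top_k]


def fake_generate(prompt: str) -> str:
    """Stand-in model call; replace with the production client."""
    return f"(answer based on {prompt.count('[doc]')} documents)"


def run_pipeline(question: str, config: dict) -> dict:
    """One request through retrieval, prompt assembly, generation, post-processing."""
    start = time.perf_counter()
    docs = fake_retrieve(question, config["top_k"])
    context = "\n".join(f"[doc] {d}" for d in docs)
    prompt = config["prompt_template"].format(context=context, question=question)
    answer = fake_generate(prompt).strip()
    return {
        "answer": answer,
        "retrieved": docs,
        "latency_s": time.perf_counter() - start,
    }


def run_all(questions: list[str], configs: dict[str, dict],
            pipeline: Callable[[str, dict], dict] = run_pipeline) -> dict:
    """Run every config over the identical question list through the same code path."""
    return {
        name: [pipeline(q, cfg) for q in questions]
        for name, cfg in configs.items()
    }


template = "Context:\n{context}\n\nQuestion: {question}"
configs = {
    "baseline": {"prompt_template": template, "top_k": 1},
    "candidate": {"prompt_template": template, "top_k": 3},
}
results = run_all(["How do I update my card?"], configs)
```

Because both configurations go through `run_pipeline`, differences in the results come from the configuration and not from diverging harness code.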

Step 4: Collect outputs and operational metrics

Store response text, structured outputs, latency, cost estimates, and retrieval artifacts where possible.
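
A minimal sketch of artifact storage, assuming one directory per run with a `config.json` and an `outputs.jsonl`. The directory layout, record fields, and per-token prices are all assumptions made for illustration; the prices in particular are placeholders and not real rates.

```python
import json
import tempfile
from pathlib import Path


def estimate_cost_usd(prompt_tokens: int, completion_tokens: int,
                      usd_per_1k_prompt: float = 0.001,
                      usd_per_1k_completion: float = 0.002) -> float:
    """Rough cost estimate. The default prices are placeholders, not real rates."""
    return (prompt_tokens / 1000 * usd_per_1k_prompt
            + completion_tokens / 1000 * usd_per_1k_completion)


def write_artifacts(run_dir: Path, config: dict, records: list[dict]) -> Path:
    """Write config.json plus one JSON line per test case under run_dir."""
    run_dir.mkdir(parents=True, exist_ok=True)
    (run_dir / "config.json").write_text(
        json.dumps(config, indent=2, sort_keys=True), encoding="utf-8"
    )
    out_path = run_dir / "outputs.jsonl"
    with out_path.open("w", encoding="utf-8") as fh:
        for rec in records:
            fh.write(json.dumps(rec, ensure_ascii=False) + "\n")
    return out_path


def read_artifacts(out_path: Path) -> list[dict]:
    """Load the per-case records back for scoring or regression triage."""
    with out_path.open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]


record = {
    "case_id": "q1",
    "bucket": "billing",
    "response_text": "Update it under Settings > Billing [1].",
    "structured_output": {"answer": "Update it under Settings > Billing.", "citations": [1]},
    "latency_s": 0.84,
    "cost_usd_estimate": estimate_cost_usd(1200, 150),
    "retrieved_doc_ids": ["doc-17", "doc-03"],
}

# A temporary directory keeps the example self-contained; a real harness
# would write to a durable location.
with tempfile.TemporaryDirectory() as tmp:
    out_path = write_artifacts(Path(tmp) / "runs" / "candidate", {"top_k": 8}, [record])
    loaded = read_artifacts(out_path)
    saved_config = json.loads((out_path.parent / "config.json").read_text(encoding="utf-8"))
```

Plain files are enough at this stage: they diff well, survive tooling changes, and can be loaded into a notebook or database later.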

Step 5: Score with a rubric and validators

Combine automated checks (schema, citations present) with human or rubric-based judgments.
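
The automated half can start as a few plain functions. This sketch assumes the system returns JSON with `answer` and `citations` fields and uses `[1]`-style citation markers; both are assumptions for the example, as is the 1 to 5 scale for the optional human rating.

```python
import json
import re
from typing import Optional

REQUIRED_FIELDS = {"answer": str, "citations": list}  # hypothetical output schema
CITATION_PATTERN = re.compile(r"\[(\d+)\]")            # assumes [1]-style markers


def check_schema(raw_output: str) -> list[str]:
    """Return a list of schema problems; an empty list means the output passed."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["not valid JSON"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            problems.append(f"missing field: {field}")
        elif not isinstance(data[field], expected_type):
            problems.append(f"wrong type for field: {field}")
    return problems


def check_citations(raw_output: str, num_retrieved: int) -> list[str]:
    """Require at least one citation marker, each pointing at a retrieved doc."""
    problems = check_schema(raw_output)
    if problems:
        return problems
    answer = json.loads(raw_output)["answer"]
    cited = [int(n) for n in CITATION_PATTERN.findall(answer)]
    if not cited:
        return ["no citation markers in answer"]
    return [f"citation out of range: [{n}]" for n in cited if not 1 <= n <= num_retrieved]


def score(raw_output: str, num_retrieved: int,
          human_rating: Optional[int] = None) -> dict:
    """Merge automated checks with an optional human rubric rating (1 to 5)."""
    problems = check_citations(raw_output, num_retrieved)
    return {
        "passed_checks": not problems,
        "problems": problems,
        "human_rating": human_rating,
    }


good = '{"answer": "Update it under Settings [1].", "citations": [1]}'
missing = '{"answer": "See [3]."}'
result_good = score(good, num_retrieved=2, human_rating=4)
result_missing = score(missing, num_retrieved=2)
```

Keeping the human rating in the same record as the automated result makes it easy to spot cases where the checks pass but a reviewer still rates the answer poorly.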

Step 6: Review diffs by bucket

Focus on regressions first, then inspect any improvements for hidden trade-offs.
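
A bucketed diff can be sketched in a few lines, assuming each case has already been reduced to pass/fail for both runs. The case ids and bucket names below are illustrative.

```python
from collections import defaultdict


def diff_by_bucket(cases, baseline_pass, candidate_pass):
    """Group per-case pass/fail changes by bucket.

    cases: list of (case_id, bucket) pairs.
    baseline_pass / candidate_pass: dict mapping case_id to True or False.
    """
    report = defaultdict(
        lambda: {"regressions": [], "improvements": [], "unchanged": 0}
    )
    for case_id, bucket in cases:
        before, after = baseline_pass[case_id], candidate_pass[case_id]
        if before and not after:
            report[bucket]["regressions"].append(case_id)
        elif after and not before:
            report[bucket]["improvements"].append(case_id)
        else:
            report[bucket]["unchanged"] += 1
    return dict(report)


def review_order(report):
    """Buckets with the most regressions come first; ties are broken by name."""
    return sorted(report, key=lambda b: (-len(report[b]["regressions"]), b))


cases = [("q1", "billing"), ("q2", "billing"), ("q3", "multi_hop"), ("q4", "refusal")]
baseline_pass = {"q1": True, "q2": True, "q3": False, "q4": True}
candidate_pass = {"q1": True, "q2": False, "q3": True, "q4": True}

report = diff_by_bucket(cases, baseline_pass, candidate_pass)
order = review_order(report)
```

Listing improved case ids alongside regressions keeps them available for the second pass, where improvements are inspected for hidden trade-offs.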

Metrics, Checks, and Guardrails

Checks

- Baseline and candidate share the same input set and scoring criteria.

- Run artifacts include enough metadata to reproduce results.

- Results are segmented by bucket and failure type.

- Decision notes capture why a candidate was accepted or rejected.
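
The first two checks above can be enforced before any scores are compared. The sketch below assumes each run carries a small metadata dict; the key names (`test_set_version`, `rubric_version`, `config_fingerprint`, `case_ids`) are hypothetical.

```python
REQUIRED_METADATA = (
    "test_set_version",
    "rubric_version",
    "config_fingerprint",
    "case_ids",
)


def comparability_problems(baseline_run: dict, candidate_run: dict) -> list[str]:
    """Return reasons the two runs should not be compared; empty means comparable."""
    problems = []
    for label, run in (("baseline", baseline_run), ("candidate", candidate_run)):
        for key in REQUIRED_METADATA:
            if key not in run:
                problems.append(f"{label} run is missing metadata: {key}")
    if problems:
        return problems
    # Inputs and scoring criteria must match; the config fingerprint may differ.
    for key in ("test_set_version", "rubric_version"):
        if baseline_run[key] != candidate_run[key]:
            problems.append(
                f"{key} differs: {baseline_run[key]!r} vs {candidate_run[key]!r}"
            )
    if set(baseline_run["case_ids"]) != set(candidate_run["case_ids"]):
        problems.append("runs cover different test cases")
    return problems


baseline_run = {
    "test_set_version": "2024-06-01",
    "rubric_version": "r3",
    "config_fingerprint": "a1b2c3",
    "case_ids": ["q1", "q2", "q3"],
}
candidate_run = {**baseline_run, "config_fingerprint": "d4e5f6"}
stale_run = {**candidate_run, "test_set_version": "2024-05-01", "case_ids": ["q1", "q2"]}
```

Running this check first means a comparison is refused outright when the inputs differ, instead of producing numbers that look valid but are not.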

Metrics

- Regression count by bucket - Fastest way to identify whether a change broke critical behavior.

- Win/loss/tie comparison vs baseline - Useful for side-by-side decisions when exact scores are noisy.

- Latency and cost deltas - Prevents quality improvements from hiding operational regressions.

- Groundedness and format compliance - Especially important for prompt + RAG systems with structured outputs.
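
Two of these metrics can be sketched directly: a win/loss/tie count and a relative delta for latency or cost. The tie margin of 0.05 and the use of the median are assumptions chosen for illustration, and the scores and latencies are made-up sample values.

```python
from statistics import median


def win_loss_tie(baseline_scores, candidate_scores, tie_margin=0.05):
    """Count per-case outcomes for the candidate; gaps within tie_margin are ties."""
    counts = {"win": 0, "loss": 0, "tie": 0}
    for case_id, base in baseline_scores.items():
        delta = candidate_scores[case_id] - base
        if abs(delta) <= tie_margin:
            counts["tie"] += 1
        elif delta > 0:
            counts["win"] += 1
        else:
            counts["loss"] += 1
    return counts


def relative_delta(baseline_values, candidate_values):
    """Relative change in the median; 0.25 means the candidate is 25% higher."""
    base = median(baseline_values)
    return (median(candidate_values) - base) / base


# Made-up sample values for illustration.
baseline_scores = {"q1": 0.80, "q2": 0.60, "q3": 0.90}
candidate_scores = {"q1": 0.82, "q2": 0.75, "q3": 0.70}
outcome = win_loss_tie(baseline_scores, candidate_scores)

latency_change = relative_delta([1.0, 1.2, 0.8], [1.5, 1.25, 1.0])
```

The tie margin matters when scores are noisy: without it, small random differences are counted as wins or losses and the comparison looks more decisive than it is.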

Production Trade-offs

- Harness simplicity vs feature depth - A simple harness ships faster and teaches the right habits; add dashboards and automation later.

- Automated scoring vs human review - Automation scales; human review catches subtle failure modes. Use automation for gating and human review for diagnosis.

- Batch cadence vs continuous evaluation - Batch runs are easy to manage; continuous runs improve safety but cost more effort and infrastructure.

Example Scenario

A retrieval ranking tweak improved average relevance scores but increased formatting failures because the retrieved context passed to the model grew longer. The harness surfaced the regression in schema compliance before rollout.
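
A simple gate is one way a harness could catch this kind of regression. In the sketch below the metric names, the 0.02 threshold, and all numbers are invented for illustration; they are not measurements from the scenario.

```python
def gate(baseline: dict, candidate: dict, guarded: tuple,
         max_drop: float = 0.02) -> dict:
    """Block rollout when any guarded metric drops by more than max_drop (absolute)."""
    blocked_by = {
        metric: round(candidate[metric] - baseline[metric], 4)
        for metric in guarded
        if baseline[metric] - candidate[metric] > max_drop
    }
    return {"ship": not blocked_by, "blocked_by": blocked_by}


# Illustrative numbers only, invented for this sketch (not measurements).
baseline = {"avg_relevance": 0.71, "schema_compliance": 0.98}
candidate = {"avg_relevance": 0.78, "schema_compliance": 0.90}

decision = gate(baseline, candidate, guarded=("avg_relevance", "schema_compliance"))
```

Here the relevance gain does not offset the compliance drop, because each guarded metric is checked on its own and not averaged into a single score.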

How This Fits With Related Posts

This post bridges the site's prompting and RAG material to newer paths such as evaluation, LLMOps, security, and cost/performance. In practice, readers should move between Prompt Structure Patterns for Production, Output Control with JSON and Schemas, Retrieval Is the Hard Part, and Evaluating RAG Quality depending on where the failure occurs.

Related Context From This Site

These posts are directly relevant to this topic and connect it to the site's foundations, prompting, RAG, and news coverage.

- Prompt Structure Patterns for Production

- Output Control with JSON and Schemas

- Why RAG Exists (And When Not to Use It)

- Chunking Strategies That Actually Work

- Retrieval Is the Hard Part

- Evaluating RAG Quality: Precision, Recall, and Faithfulness

- Why Most RAG Systems Fail in Production

Read Next

Playbook: Building an Evaluation Pipeline for Prompt + RAG Changes

A step-by-step playbook for building an evaluation pipeline that catches regressions in prompt and RAG changes before production rollout.