Playbook: Building an Evaluation Pipeline for Prompt + RAG Changes

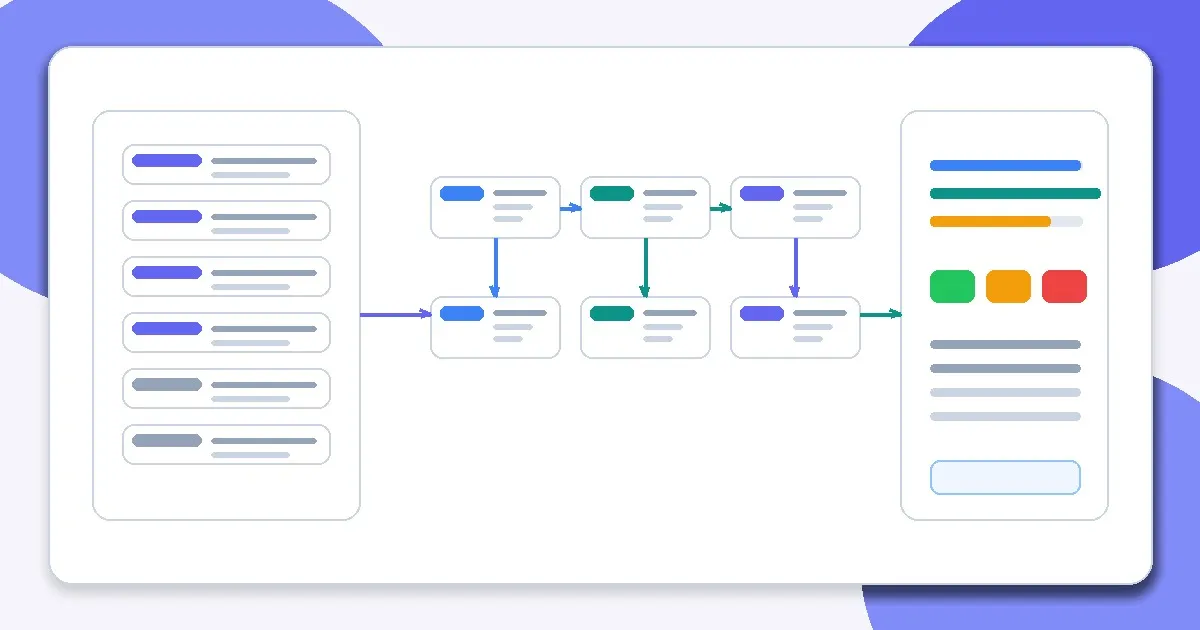

TL;DR

A step-by-step playbook for building an evaluation pipeline that catches regressions in prompt and RAG changes before production rollout.

Teams need a repeatable process, not just principles, to catch regressions when prompts and retrieval settings change. This playbook turns the evaluation concepts into an operational routine.

This playbook is designed to be run by a product engineer, ML engineer, or platform owner who needs a repeatable release process for prompt + RAG changes.

Prerequisites and Scope

- A versioned prompt + RAG pipeline (or at least a documented baseline configuration).

- A small test set, grouped into buckets (for example by query type or known failure mode), with a pass/fail or rubric judgment for each case.

- Ability to run baseline and candidate systems side by side.

- A place to store run artifacts (outputs, metrics, config metadata).

Inputs, Outputs, and Success Criteria

- Inputs - Baseline config, candidate config, test set, scoring rubric/validators, run metadata (date, owner, commit/config versions).

- Outputs - Per-case results, aggregated metrics by bucket, regression list, decision summary (ship/iterate/block), and follow-up actions.

- Success criteria - The pipeline reliably surfaces regressions, supports reproducible reruns, and produces decisions the team trusts.

Step-by-Step Implementation Workflow

Step 1: Freeze the comparison scope

Decide exactly what changed (prompt, model, retriever, chunking, ranking, validator) and what remains constant.
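One way to enforce a frozen scope is to diff the two configurations and flag any field that changed without being declared. The sketch below assumes configs are flat dictionaries; the field names (`prompt_version`, `chunk_overlap`, and so on) are hypothetical and stand in for whatever your pipeline actually versions.

```python
from typing import Any

_MISSING = object()  # sentinel so a missing key differs from an explicit None


def changed_keys(baseline: dict[str, Any], candidate: dict[str, Any]) -> set[str]:
    """Return the config keys whose values differ between the two runs."""
    keys = baseline.keys() | candidate.keys()
    return {k for k in keys if baseline.get(k, _MISSING) != candidate.get(k, _MISSING)}


def out_of_scope_changes(
    baseline: dict[str, Any], candidate: dict[str, Any], intended: set[str]
) -> set[str]:
    """Return keys that changed but were not declared as part of the comparison."""
    return changed_keys(baseline, candidate) - intended


# Hypothetical configs for illustration only.
baseline_cfg = {"prompt_version": "v12", "model": "model-a", "chunk_overlap": 50, "top_k": 5}
candidate_cfg = {"prompt_version": "v13", "model": "model-a", "chunk_overlap": 100, "top_k": 5}

unexpected = out_of_scope_changes(baseline_cfg, candidate_cfg, intended={"prompt_version"})
if unexpected:
    print(f"Unintended changes: {sorted(unexpected)}")
```

Running a check like this before the evaluation starts makes it harder for a second change to slip into a comparison that was meant to isolate one variable.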

Step 2: Select the evaluation set

Choose a smoke set for fast iteration and a deeper regression set for final decisions. Include known failure buckets.
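As a rough illustration, a smoke set can be drawn per bucket so that every bucket is represented and known failures are always included. The case fields (`id`, `bucket`, `known_failure`) and the `per_bucket` size are assumptions for this sketch, not a prescribed schema.

```python
import random
from collections import defaultdict


def build_smoke_set(cases: list[dict], per_bucket: int = 2, seed: int = 0) -> list[dict]:
    """Pick a small, reproducible sample from each bucket.

    Cases flagged as known failures are always kept; the remaining slots
    in each bucket are filled by seeded random sampling.
    """
    rng = random.Random(seed)  # fixed seed keeps the smoke set stable across runs
    by_bucket: dict[str, list[dict]] = defaultdict(list)
    for case in cases:
        by_bucket[case["bucket"]].append(case)

    smoke: list[dict] = []
    for bucket in sorted(by_bucket):
        members = by_bucket[bucket]
        pinned = [c for c in members if c.get("known_failure")]
        rest = [c for c in members if not c.get("known_failure")]
        open_slots = max(per_bucket - len(pinned), 0)
        smoke.extend(pinned + rng.sample(rest, min(open_slots, len(rest))))
    return smoke


# Hypothetical test cases for illustration only.
cases = [
    {"id": "c1", "bucket": "citations", "known_failure": True},
    {"id": "c2", "bucket": "citations"},
    {"id": "c3", "bucket": "citations"},
    {"id": "m1", "bucket": "multi_hop"},
]
smoke_set = build_smoke_set(cases, per_bucket=2)
```

The deeper regression set would simply be the full list of cases; only the fast iteration loop uses the sample.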

Step 3: Run baseline and candidate through the same pipeline

Capture outputs, structured results, timing, and cost metadata for each case.
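A minimal harness sketch follows, assuming each system can be wrapped as a function from a question string to an output string. The `CaseResult` fields and the stub systems are hypothetical. Cost is left out because it depends on provider-specific token accounting, but it would be recorded in the same result record.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class CaseResult:
    case_id: str
    system: str       # "baseline" or "candidate"
    output: str
    latency_s: float  # wall-clock time for this single case


def run_system(name: str, system: Callable[[str], str], cases: list[dict]) -> list[CaseResult]:
    """Run every case through one system and record output and latency."""
    results = []
    for case in cases:
        start = time.perf_counter()
        output = system(case["question"])
        latency = time.perf_counter() - start
        results.append(CaseResult(case["id"], name, output, latency))
    return results


# Stub systems standing in for real prompt + RAG pipelines.
def baseline_system(question: str) -> str:
    return f"baseline answer to: {question}"


def candidate_system(question: str) -> str:
    return f"candidate answer to: {question}"


eval_cases = [{"id": "q1", "question": "What is the refund window?"}]
baseline_results = run_system("baseline", baseline_system, eval_cases)
candidate_results = run_system("candidate", candidate_system, eval_cases)
```

Because both systems go through the same `run_system` function, any difference in the results comes from the systems themselves and not from the harness.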

Step 4: Score with automated checks plus rubric review

Use schema/constraint checks where possible and human/rubric review for task success and nuanced failures.
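For the automated half, a schema and constraint check can be as simple as the sketch below. It assumes the system is expected to return a JSON object with an `answer` string and a non-empty `citations` list; that schema is hypothetical and should be replaced by your own output contract.

```python
import json

# Hypothetical output contract: field name -> expected Python type.
REQUIRED_FIELDS = {"answer": str, "citations": list}


def check_output(raw: str) -> list[str]:
    """Return a list of failure labels; an empty list means the output passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["invalid_json"]
    if not isinstance(data, dict):
        return ["not_an_object"]

    failures = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            failures.append(f"missing:{field}")
        elif not isinstance(data[field], expected_type):
            failures.append(f"wrong_type:{field}")

    # Constraint check: an answer with an empty citation list is treated as a failure.
    if isinstance(data.get("citations"), list) and not data["citations"]:
        failures.append("no_citations")
    return failures
```

Checks like these only confirm structure. Whether the answer is correct or well grounded still needs rubric review.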

Step 5: Segment and compare results

Review win/loss/tie, regressions by bucket, and operational deltas (latency/cost/fallbacks).
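The win/loss/tie comparison can be sketched as a small aggregation over per-case pass/fail results. The row fields (`id`, `bucket`, `baseline_pass`, `candidate_pass`) are assumed names for this example.

```python
from collections import Counter, defaultdict


def compare_by_bucket(rows: list[dict]) -> tuple[dict[str, dict[str, int]], list[str]]:
    """Count win/loss/tie per bucket and collect the ids of regressed cases.

    win  = candidate passes where baseline failed
    loss = baseline passed where candidate fails (a regression)
    tie  = both pass or both fail
    """
    summary: dict[str, Counter] = defaultdict(Counter)
    regressions: list[str] = []
    for row in rows:
        base_ok, cand_ok = row["baseline_pass"], row["candidate_pass"]
        if cand_ok and not base_ok:
            outcome = "win"
        elif base_ok and not cand_ok:
            outcome = "loss"
            regressions.append(row["id"])
        else:
            outcome = "tie"
        summary[row["bucket"]][outcome] += 1
    return {bucket: dict(counts) for bucket, counts in summary.items()}, regressions


# Hypothetical scored rows for illustration only.
rows = [
    {"id": "c1", "bucket": "citations", "baseline_pass": True, "candidate_pass": False},
    {"id": "c2", "bucket": "citations", "baseline_pass": True, "candidate_pass": True},
    {"id": "m1", "bucket": "multi_hop", "baseline_pass": False, "candidate_pass": True},
]
summary, regressions = compare_by_bucket(rows)
```

Segmenting by bucket matters because an aggregate pass rate can stay flat while one bucket regresses and another improves.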

Step 6: Make a decision and record mitigations

Accept, reject, or ship behind a gate. Document why and what to monitor after deployment.
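A decision rule can be written down explicitly so that the same evidence always leads to the same outcome. The policy below is a hypothetical example, not a recommended threshold: any regression in a critical bucket rejects the change, a small number of non-critical regressions ships behind a gate, and a clean run is accepted.

```python
def decide(
    regressions_by_bucket: dict[str, int],
    critical_buckets: set[str],
    max_minor_regressions: int = 2,  # hypothetical tolerance; tune per product
) -> str:
    """Map regression counts to 'accept', 'ship_behind_gate', or 'reject'."""
    critical = sum(
        count for bucket, count in regressions_by_bucket.items() if bucket in critical_buckets
    )
    total = sum(regressions_by_bucket.values())

    if critical > 0 or total > max_minor_regressions:
        return "reject"
    if total > 0:
        return "ship_behind_gate"
    return "accept"
```

For example, `decide({"formatting": 1}, critical_buckets={"citations"})` returns `"ship_behind_gate"`, and the regressed formatting cases become the items to monitor after deployment.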

Step 7: Feed failures back into the test set

Add new regressions and edge cases so the next run gets stronger.
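A small sketch of the feedback step, assuming test cases are dictionaries keyed by a `question` field (a hypothetical name). New cases are deduplicated against the existing set by normalized question text so that repeated regressions do not inflate a bucket.

```python
def normalize(question: str) -> str:
    """Lowercase and collapse whitespace so trivial variants count as duplicates."""
    return " ".join(question.lower().split())


def add_regressions(test_set: list[dict], new_cases: list[dict]) -> list[dict]:
    """Return a new test set with unseen cases appended; inputs are not mutated."""
    seen = {normalize(case["question"]) for case in test_set}
    added = []
    for case in new_cases:
        key = normalize(case["question"])
        if key not in seen:
            seen.add(key)
            added.append(case)
    return test_set + added
```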

Validation and Regression Checks

- Re-run a subset of cases and confirm that scores are stable enough across runs to support a decision.

- Verify baseline and candidate used the intended configs and data snapshots.

- Check that failures are labeled by root cause (retrieval, prompt, model, validator, policy, unknown).

- Confirm cost/latency regressions were reviewed alongside quality metrics.
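Two of the checks above lend themselves to simple automation: fingerprinting the config that was actually used, and measuring how often a rerun agrees with the original run. The sketch below uses only the standard library; the function names and the idea of comparing per-case pass/fail dictionaries are assumptions for illustration.

```python
import hashlib
import json


def config_fingerprint(config: dict) -> str:
    """Short, order-independent hash of a JSON-serializable config."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def rerun_agreement(first: dict[str, bool], second: dict[str, bool]) -> float:
    """Fraction of shared cases where two runs produced the same pass/fail result."""
    shared = first.keys() & second.keys()
    if not shared:
        return 0.0
    return sum(first[case_id] == second[case_id] for case_id in shared) / len(shared)
```

Storing the fingerprint with each run's artifacts lets a reviewer confirm that baseline and candidate used the intended configs. What level of rerun agreement counts as stable enough is a team decision.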

Operational Runbook

- Alerts - Notify owners when post-deploy validation failure rate, fallback rate, or groundedness indicators breach thresholds after a promoted change.

- Ownership - Assign an owner for the evaluation pipeline, plus a feature owner responsible for launch decisions and follow-up fixes.

- Rollback - Tie rollout rollback criteria to observed regressions (for example schema failures, groundedness drop, or cost spike) and test rollback procedures in advance.
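The alert and rollback criteria can share one threshold table. In the sketch below, the metric names and limits are hypothetical placeholders; rates are checked against an upper limit and quality scores against a lower limit.

```python
# Hypothetical thresholds; replace with values agreed by the owning team.
MAX_THRESHOLDS = {"validation_failure_rate": 0.02, "fallback_rate": 0.05}
MIN_THRESHOLDS = {"groundedness_score": 0.85}


def breached_metrics(metrics: dict[str, float]) -> list[str]:
    """Return the sorted names of metrics that are outside their allowed range.

    Metrics missing from the input are skipped, not treated as breaches.
    """
    breaches = [
        name for name, limit in MAX_THRESHOLDS.items()
        if name in metrics and metrics[name] > limit
    ]
    breaches += [
        name for name, limit in MIN_THRESHOLDS.items()
        if name in metrics and metrics[name] < limit
    ]
    return sorted(breaches)


# Example post-deploy snapshot (made-up numbers).
snapshot = {"validation_failure_rate": 0.03, "fallback_rate": 0.01, "groundedness_score": 0.80}
alerts = breached_metrics(snapshot)
```

A non-empty result would notify the owners and, if the rollback criteria are tied to the same metrics, start the rollback procedure that was tested in advance.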

Production Trade-offs

- Evaluation depth vs iteration speed - Use smoke runs for rapid prompt tuning and deeper runs for launch decisions.

- Automation vs reviewer context - Automation scales comparisons, but reviewers still need case context to classify root causes accurately.

- Centralized pipeline vs team-local harnesses - Centralized systems improve consistency; local harnesses improve adoption speed. Start with local discipline and standardize gradually.

Example Rollout Decision

A team changed chunking overlap and prompt instructions in the same release. Following the playbook, the team evaluated each change separately against the baseline. That comparison surfaced regressions in citation grounding, and the risky rollout was blocked.

Recommended Companion Posts

This playbook works best when readers already understand Evaluating RAG Quality, Debugging Bad Prompts Systematically, and Retrieval Is the Hard Part. For the broader news framing, see Evaluation Is Becoming the Real AI Differentiator.

Related Context From This Site

These posts are directly relevant to this topic and connect it to the site's foundations, prompting, RAG, and news coverage.

- Evaluating RAG Quality: Precision, Recall, and Faithfulness

- Debugging Bad Prompts Systematically

- Output Control with JSON and Schemas

- Retrieval Is the Hard Part

- Chunking Strategies That Actually Work

- Evaluation Is Becoming the Real AI Differentiator

- Why Most RAG Systems Fail in Production

Read Next

Observability for AI Systems: What to Log, Trace, and Alert On

A production-focused observability framework for AI systems covering logs, traces, metrics, alerts, and debugging workflows.
