Observability for AI Systems: What to Log, Trace, and Alert On

A production-focused observability framework for AI systems covering logs, traces, metrics, alerts, and debugging workflows.

TL;DR

AI incidents are often misdiagnosed because teams cannot see which layer failed. Observability makes it possible to separate prompt, retrieval, model, validator, and integration failures under production load.

This topic builds on the site's foundations and reliability posts, especially How LLMs Actually Work, Why Models Hallucinate, and Why AI Demos Scale Poorly Into Real Systems.

Why This Matters in Production

Most AI failures in production are not caused by one bad model response. They come from system design choices: unclear requirements, weak evaluation, poor observability, missing guardrails, and rollout practices that assume demos predict real usage.

Good observability helps teams make better decisions under constraints. That means defining what is in scope, measuring outcomes, and making trade-offs explicit instead of relying on intuition alone.

When To Use This Approach

- You are running an AI feature beyond internal demos.

- You need on-call debugging for user-reported bad answers or workflow failures.

- You want to detect regressions before they become support escalations.

When Not To Use It (Yet)

- You are logging raw sensitive user content without a data-handling policy.

- You plan to observe only model outputs and ignore upstream/downstream dependencies.

- No one owns alert response, and you expect alerts alone to solve incidents.

Common Failure Modes

1. Logs capture text but not context

Teams store outputs but omit prompt version, model version, retrieval metadata, or validator results.

2. No trace correlation

A single user request crosses retrieval, model, and application layers but cannot be traced end-to-end.

3. Alerts on vanity metrics

Token volume alerts fire often, while groundedness or validation failure spikes go unnoticed.

4. Unsafe logging

Sensitive inputs are stored without redaction or access boundaries, creating a security problem while trying to improve reliability.
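One way to reduce the unsafe-logging risk is to redact and truncate text before it reaches the log pipeline. The sketch below is illustrative only: the two regular expressions and the `safe_log_fields` helper are hypothetical, and a real data-handling policy would define its own patterns and retention rules.

```python
import re

# Hypothetical patterns; a real policy would define these per data class.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(text: str) -> str:
    """Replace email addresses and card-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return CARD_RE.sub("[CARD]", text)


def safe_log_fields(user_input: str, max_chars: int = 200) -> dict:
    """Return redacted, truncated text plus metadata that is safe to log."""
    redacted = redact(user_input)
    return {
        "input_chars": len(user_input),        # length is useful and non-sensitive
        "input_preview": redacted[:max_chars],  # never the raw text
        "was_redacted": redacted != user_input,
    }


fields = safe_log_fields("Reach me at jane@example.com, card 4111 1111 1111 1111")
```

Logging the length and a redacted preview keeps most of the debugging value while limiting what an attacker or an over-broad dashboard can see.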

Implementation Workflow

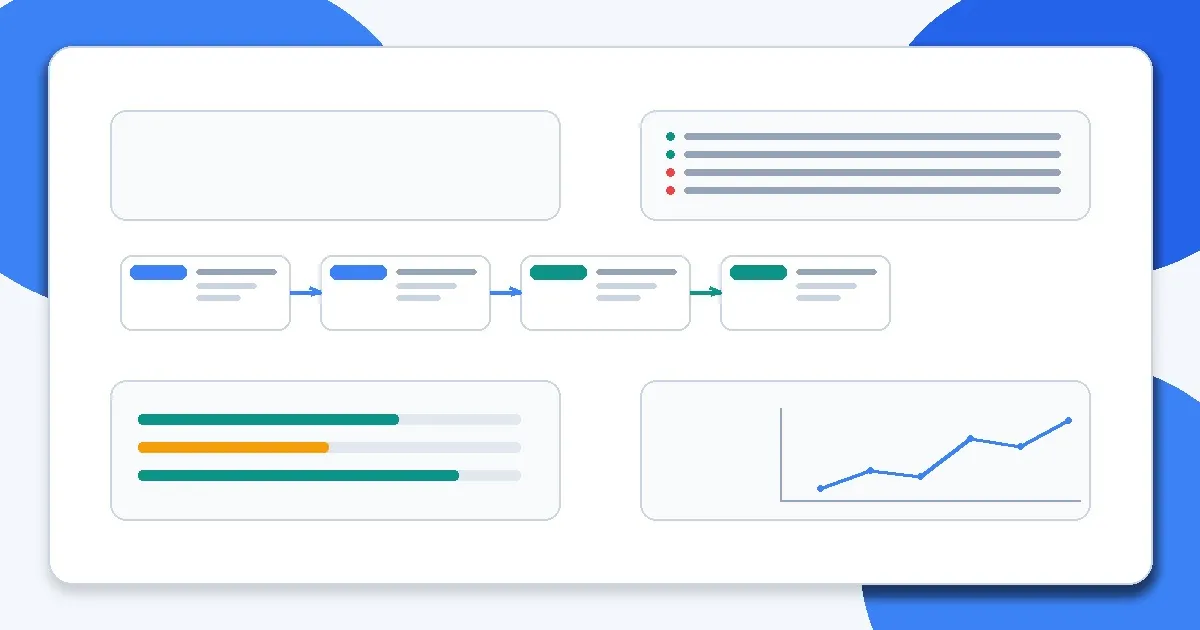

Step 1: Define observability schema

Choose request IDs, version fields, timing fields, and outcome labels used across the AI pipeline.
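A minimal sketch of such a schema, assuming a Python service. The class name `PipelineEvent`, the field names, and the outcome labels are hypothetical; the point is that every stage emits the same shape, keyed by one request ID.

```python
from dataclasses import asdict, dataclass, field
import time
import uuid

# Hypothetical outcome labels; pick the set that matches your pipeline.
ALLOWED_OUTCOMES = {"ok", "timeout", "validation_failed", "refused", "fallback", "error"}


@dataclass
class PipelineEvent:
    """One structured record per pipeline stage, joined by request_id."""
    request_id: str
    stage: str                 # e.g. "retrieval", "model", "validator"
    outcome: str               # must be one of ALLOWED_OUTCOMES
    prompt_version: str
    model_version: str
    retrieval_version: str
    started_at: float = field(default_factory=time.time)
    duration_ms: float = 0.0

    def __post_init__(self):
        # Reject free-form labels so dashboards can group on outcome.
        if self.outcome not in ALLOWED_OUTCOMES:
            raise ValueError(f"unknown outcome label: {self.outcome!r}")


def new_request_id() -> str:
    """Generate an ID once at the edge and pass it to every stage."""
    return uuid.uuid4().hex


event = PipelineEvent(
    request_id=new_request_id(),
    stage="retrieval",
    outcome="ok",
    prompt_version="p-12",
    model_version="m-2024-06",
    retrieval_version="idx-7",
    duration_ms=41.5,
)
record = asdict(event)  # plain dict, ready to serialize
```

Constraining `outcome` to a fixed set is a design choice: it trades flexibility for the ability to aggregate reliably across stages.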

Step 2: Log structured events at each stage

Capture retrieval counts, selected chunks, model call metadata, validator results, and downstream execution outcomes.
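As a rough illustration, the standard library `logging` module can emit one JSON object per stage. The logger name `ai_pipeline`, the `log_stage` helper, and the field names below are assumptions for this sketch, not a prescribed format.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each record as a single JSON line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "event": record.getMessage(),
            # Structured fields are attached via `extra={"fields": {...}}`.
            **getattr(record, "fields", {}),
        }
        return json.dumps(payload, sort_keys=True)


def build_logger(stream=sys.stdout) -> logging.Logger:
    logger = logging.getLogger("ai_pipeline")
    logger.handlers.clear()  # avoid duplicate handlers on repeated setup
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


def log_stage(logger: logging.Logger, request_id: str, stage: str, **fields) -> None:
    """Emit one structured event for a pipeline stage."""
    logger.info(stage, extra={"fields": {"request_id": request_id, **fields}})


logger = build_logger()
log_stage(logger, "req-123", "retrieval", retrieved_count=4, chunk_ids=["c1", "c9"])
log_stage(logger, "req-123", "validator", schema_ok=False, errors=["missing field: answer"])
```

Chunk IDs are logged rather than chunk text, which keeps records small and sidesteps storing document content in logs.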

Step 3: Add distributed tracing

Connect user request -> orchestrator -> retrieval -> model -> tool calls -> post-processing for latency breakdowns.

Step 4: Create product and reliability dashboards

Separate business outcome metrics from debugging diagnostics so each audience gets useful visibility.

Step 5: Implement alerting on failure indicators

Alert on validation failures, refusal spikes, latency budget breaches, or groundedness drops, not just request volume.
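A simple way to express this kind of alert is a failure rate over a sliding window of recent requests. The sketch below assumes hypothetical names (`RateAlert`, the `ai-oncall` owner) and illustrative thresholds; real values should come from your own baselines.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class RateAlert:
    """Fires when the failure rate over the last `window` requests crosses `threshold`."""
    name: str
    owner: str            # who responds when this fires
    threshold: float      # e.g. 0.10 means 10% of recent requests failed
    window: int           # number of most recent requests considered
    min_samples: int = 20  # stay quiet until there is enough data

    def __post_init__(self):
        self._results = deque(maxlen=self.window)

    def observe(self, failed: bool) -> bool:
        """Record one request outcome; return True if the alert should fire."""
        self._results.append(failed)
        if len(self._results) < self.min_samples:
            return False
        rate = sum(self._results) / len(self._results)
        return rate >= self.threshold


alert = RateAlert(name="validation_failure_rate", owner="ai-oncall",
                  threshold=0.10, window=50)

# 30 healthy requests, then a burst of validation failures.
healthy = [alert.observe(False) for _ in range(30)]
burst = [alert.observe(True) for _ in range(4)]
```

In this run the alert stays silent for the healthy traffic and the first three failures, then fires on the fourth, when 4 of the last 34 requests (about 11.8%) have failed. The `min_samples` guard is a design choice to avoid paging on the first failed request after a deploy.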

Step 6: Run incident reviews and update instrumentation

Each incident should improve what you log, tag, and alert on next time.

Metrics, Checks, and Guardrails

Checks

- Every request can be traced across all major pipeline steps.

- Logs include config versions (prompt/model/retrieval) for reproducibility.

- Sensitive content handling rules are documented and enforced.

- Alert thresholds map to an action and an owner.
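Some of these checks can be automated against a sample of logged events and the alert configuration. The following is a sketch under assumed field names (`request_id`, `stage`, `prompt_version`, and so on) and an assumed list of required stages; adapt both to your pipeline.

```python
# Hypothetical requirements; adjust to the stages and versions you track.
REQUIRED_VERSION_FIELDS = ("prompt_version", "model_version", "retrieval_version")
REQUIRED_STAGES = {"retrieval", "model", "validator"}


def missing_version_fields(event: dict) -> list:
    """Version fields that are absent or empty on one event."""
    return [f for f in REQUIRED_VERSION_FIELDS if not event.get(f)]


def untraceable_requests(events: list) -> dict:
    """Map request_id -> required stages that never logged an event."""
    seen = {}
    for e in events:
        seen.setdefault(e["request_id"], set()).add(e["stage"])
    return {
        rid: sorted(REQUIRED_STAGES - stages)
        for rid, stages in seen.items()
        if REQUIRED_STAGES - stages
    }


def unowned_alerts(rules: list) -> list:
    """Names of alert rules that lack an owner or a documented action."""
    return [r["name"] for r in rules if not r.get("owner") or not r.get("action")]


events = [
    {"request_id": "r1", "stage": "retrieval", "prompt_version": "p1",
     "model_version": "m1", "retrieval_version": "i1"},
    {"request_id": "r1", "stage": "model", "prompt_version": "p1",
     "model_version": "", "retrieval_version": "i1"},
]
rules = [
    {"name": "latency_budget", "owner": "ai-oncall", "action": "runbook: latency"},
    {"name": "token_volume", "owner": "", "action": ""},
]

gaps = untraceable_requests(events)
no_owner = unowned_alerts(rules)
```

Running checks like these in CI or on a daily sample is one option for catching instrumentation drift before an incident does.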

Metrics

- Request success and task completion - Operational health should align with user outcomes, not only system uptime.

- Validation failure rate - Tracks schema, policy, or post-processing breakage.

- Latency by stage - Needed to identify whether delays come from retrieval, model inference, tool calls, or application logic.

- Fallback rate and refusal rate - Helps detect degraded behavior, drift, or policy changes.

- Cost/request by path - Required when multiple models or fallbacks are used.
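The metrics above can be derived from per-request records once stage timings, path, and cost are logged. This is a minimal aggregation sketch; the record shape (`path`, `cost_usd`, `validation_failed`, `stage_ms`) and the sample numbers are invented for illustration.

```python
from collections import defaultdict
from statistics import mean


def summarize(requests: list) -> dict:
    """Aggregate failure rates, per-stage latency, and per-path cost."""
    if not requests:
        raise ValueError("no requests to summarize")
    n = len(requests)
    stage_ms = defaultdict(list)
    cost_by_path = defaultdict(list)
    for r in requests:
        for stage, ms in r["stage_ms"].items():
            stage_ms[stage].append(ms)
        cost_by_path[r["path"]].append(r["cost_usd"])
    return {
        "validation_failure_rate": sum(r["validation_failed"] for r in requests) / n,
        # Any path other than "primary" counts as a fallback in this sketch.
        "fallback_rate": sum(r["path"] != "primary" for r in requests) / n,
        "mean_latency_ms_by_stage": {s: mean(v) for s, v in stage_ms.items()},
        "mean_cost_usd_by_path": {p: mean(v) for p, v in cost_by_path.items()},
    }


sample = [
    {"path": "primary", "cost_usd": 0.004, "validation_failed": False,
     "stage_ms": {"retrieval": 40, "model": 300}},
    {"path": "primary", "cost_usd": 0.006, "validation_failed": True,
     "stage_ms": {"retrieval": 60, "model": 500}},
    {"path": "fallback", "cost_usd": 0.001, "validation_failed": False,
     "stage_ms": {"retrieval": 2000, "model": 100}},
    {"path": "fallback", "cost_usd": 0.001, "validation_failed": False,
     "stage_ms": {"retrieval": 2000, "model": 100}},
]
summary = summarize(sample)
```

Means are used here for brevity; in practice percentiles (p95, p99) are usually more informative for latency.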

Production Trade-offs

- Visibility vs privacy risk - Detailed logs improve debugging but increase data-handling burden. Favor structured metadata and selective sampling.

- Alert sensitivity vs noise - Low thresholds catch incidents earlier but can overwhelm on-call if not tuned by severity and segment.

- Instrumentation depth vs latency overhead - Tracing every step has runtime cost; instrument the critical path first.

Example Scenario

Users report “randomly wrong” answers. Traces show a spike in retrieval timeouts causing fallback responses without citations. The fix is in retrieval reliability, not prompt tuning.
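A diagnosis like this can start from a simple query: for the requests users reported, which stage failed first? The sketch below assumes spans are available as dicts with hypothetical `name`, `status`, and `start_ms` fields, and the sample data is made up to mirror the scenario.

```python
from collections import Counter


def first_failure_counts(traces: dict, reported_ids: list) -> Counter:
    """Count the earliest non-ok span for each reported request."""
    counts = Counter()
    for rid in reported_ids:
        if rid not in traces:
            counts["no_trace"] += 1  # an instrumentation gap worth fixing
            continue
        failed = [s for s in traces[rid] if s["status"] != "ok"]
        if not failed:
            counts["no_failed_span"] += 1
            continue
        first = min(failed, key=lambda s: s["start_ms"])
        counts[f'{first["name"]}:{first["status"]}'] += 1
    return counts


traces = {
    "r1": [{"name": "retrieval", "status": "timeout", "start_ms": 0},
           {"name": "model", "status": "ok", "start_ms": 2000}],
    "r2": [{"name": "retrieval", "status": "timeout", "start_ms": 0},
           {"name": "model", "status": "ok", "start_ms": 2000}],
    "r3": [{"name": "retrieval", "status": "ok", "start_ms": 0},
           {"name": "validator", "status": "failed", "start_ms": 400}],
}
counts = first_failure_counts(traces, ["r1", "r2", "r3", "r4"])
```

If most reported requests share the same first failing stage, as the retrieval timeouts do here, that stage is the place to look before touching prompts.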

How This Fits With Related Posts

This post bridges the site's prompting and RAG material to newer topics such as evaluation, LLMOps, security, and cost/performance. In practice, readers should move between Prompt Structure Patterns for Production, Output Control with JSON and Schemas, Retrieval Is the Hard Part, and Evaluating RAG Quality depending on where the failure occurs.

Related Context From This Site

These posts are directly relevant to this topic and connect it to the site's foundations, prompting, RAG, and news coverage.

- How LLMs Actually Work: Tokens, Context, and Probability

- Why Models Hallucinate (And Why That’s Expected)

- Retrieval Is the Hard Part

- Evaluating RAG Quality: Precision, Recall, and Faithfulness

- Why AI Demos Scale Poorly Into Real Systems

- Why Every Smarter Model Also Increases System Risk

Read Next

Production Rollouts for AI Features (Shadow Mode, Canary, Guardrails, Rollback)

A rollout strategy for AI features using shadow mode, canaries, guardrails, and rollback plans to reduce production risk.
