Building a Test Set for LLM Features (Golden Cases, Edge Cases, Failure Buckets)

A practical guide to constructing reusable LLM test sets with golden cases, edge cases, and failure buckets that support regression testing.

TL;DR

Without a versioned test set, each evaluation run uses a different collection of inputs, so results from one run cannot be compared with the next. A good test set is the foundation of regression control.

This topic builds on the foundations and reliability articles, especially How LLMs Actually Work, Why Models Hallucinate, and Why AI Demos Scale Poorly Into Real Systems.

Why This Matters in Production

Most AI failures in production are not caused by one bad model response. They come from system design choices: unclear requirements, weak evaluation, poor observability, missing guardrails, and rollout practices that assume demos predict real usage.

A test set helps teams make better decisions under constraints. It forces them to define scope, measure outcomes, and make trade-offs explicit instead of relying on intuition alone.

When To Use This Approach

- You are preparing to compare prompt, model, or retrieval changes.

- You have recurring failures and need a reusable set of examples to prevent regressions.

- You want to shift review conversations from anecdotes to evidence.

When Not To Use It (Yet)

- You have no stable product behavior to test yet.

- You are trying to cover every possible query before collecting real usage patterns.

- You intend to treat the test set as static and never update it after incidents.

Common Failure Modes

1. Only happy-path examples

The system looks great until users submit ambiguous, adversarial, or incomplete inputs.

2. Expected outputs are over-specified

Teams require exact wording instead of rubric-based success criteria, causing false failures.

3. No failure buckets

Known bad behaviors are not grouped, so regressions are rediscovered repeatedly.

4. No versioning

Examples change silently, and metric shifts cannot be explained.
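One way to make silent changes visible (failure mode 4) is to fingerprint the test set and record that fingerprint with every evaluation run. The sketch below is a minimal illustration rather than a prescribed tool. The function name `fingerprint_test_set` and the case fields (`id`, `input`, `bucket`) are assumptions made for this example.

```python
import hashlib
import json


def fingerprint_test_set(cases: list[dict]) -> str:
    """Return a short hash of the test set that ignores case order and key order."""
    # Serialize each case with sorted keys, then sort the serialized cases,
    # so reordering cases or keys does not change the fingerprint.
    serialized = sorted(
        json.dumps(case, sort_keys=True, ensure_ascii=False) for case in cases
    )
    digest = hashlib.sha256("\n".join(serialized).encode("utf-8"))
    return digest.hexdigest()[:12]


# Hypothetical cases for illustration only.
cases_v1 = [
    {"id": "sum-001", "input": "Summarize this email thread.", "bucket": "happy_path"},
    {"id": "sum-002", "input": "", "bucket": "malformed_input"},
]
# Same set with one input edited.
cases_v2 = [dict(cases_v1[0], input="Summarize this thread."), cases_v1[1]]

print(fingerprint_test_set(cases_v1) == fingerprint_test_set(cases_v1[::-1]))  # True
print(fingerprint_test_set(cases_v1) == fingerprint_test_set(cases_v2))        # False
```

If two runs report different fingerprints, the examples changed between them, and any metric shift should be read with that in mind.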

Implementation Workflow

Step 1: Define test set purpose

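Decide whether the set supports launch gating, regression tests, or deep diagnosis. The structure differs for each. For example, a launch gate usually calls for a small set with firm pass thresholds, while a diagnostic set can be larger and organized around specific failure classes.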

Step 2: Collect examples from real usage and planned use cases

Combine product requirements, support tickets, and observed failures where possible.

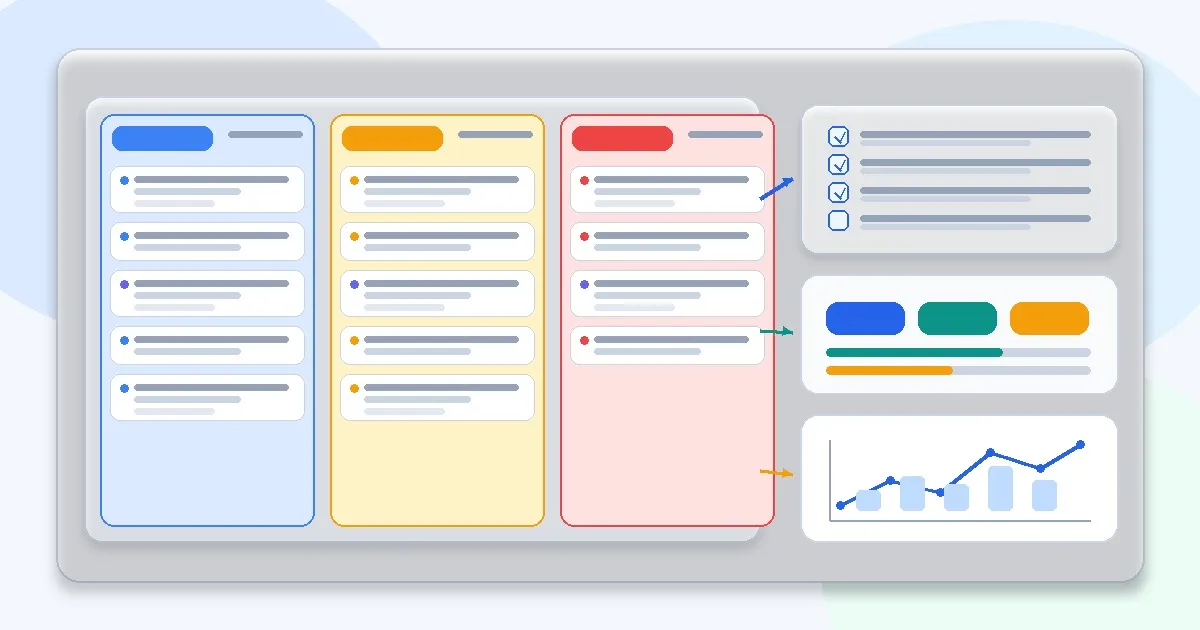

Step 3: Create buckets

Use categories like happy path, edge case, ambiguous input, policy boundary, malformed input, and known regression.
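The categories above can be written down as a fixed vocabulary so that a typo in a bucket label fails loudly instead of creating a new, empty category. This is a minimal sketch. The `Bucket` enum, the `group_by_bucket` helper, and the case fields are hypothetical names chosen for illustration.

```python
from collections import defaultdict
from enum import Enum


class Bucket(str, Enum):
    HAPPY_PATH = "happy_path"
    EDGE_CASE = "edge_case"
    AMBIGUOUS_INPUT = "ambiguous_input"
    POLICY_BOUNDARY = "policy_boundary"
    MALFORMED_INPUT = "malformed_input"
    KNOWN_REGRESSION = "known_regression"


def group_by_bucket(cases: list[dict]) -> dict:
    """Map each bucket to the ids of the cases assigned to it."""
    grouped = defaultdict(list)
    for case in cases:
        # Bucket(...) raises ValueError for a label outside the vocabulary.
        grouped[Bucket(case["bucket"])].append(case["id"])
    return dict(grouped)


# Hypothetical cases for illustration only.
cases = [
    {"id": "c1", "bucket": "happy_path"},
    {"id": "c2", "bucket": "malformed_input"},
    {"id": "c3", "bucket": "happy_path"},
]
grouped = group_by_bucket(cases)
print(grouped[Bucket.HAPPY_PATH])  # ['c1', 'c3']
```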

Step 4: Write expected judgments

Prefer rubrics and constraints over exact string matches unless exact output is required.
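A rubric can be as simple as a handful of constraints that any acceptable answer must satisfy. The sketch below assumes a hypothetical `Rubric` with required phrases, forbidden phrases, and a word limit. Real rubrics often need human or model-graded judgments that plain string checks cannot express.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Rubric:
    must_include: list[str] = field(default_factory=list)
    must_not_include: list[str] = field(default_factory=list)
    max_words: Optional[int] = None


def judge(output: str, rubric: Rubric) -> list[str]:
    """Return the list of rubric violations; an empty list means the case passes."""
    text = output.lower()
    violations = []
    for phrase in rubric.must_include:
        if phrase.lower() not in text:
            violations.append(f"missing required phrase: {phrase!r}")
    for phrase in rubric.must_not_include:
        if phrase.lower() in text:
            violations.append(f"contains forbidden phrase: {phrase!r}")
    if rubric.max_words is not None and len(output.split()) > rubric.max_words:
        violations.append(f"longer than {rubric.max_words} words")
    return violations


# Hypothetical rubric for a refund-policy answer.
rubric = Rubric(must_include=["refund", "14 days"],
                must_not_include=["guarantee"], max_words=40)

# Two differently worded answers both pass; an exact-match check would fail one.
print(judge("You can request a refund within 14 days of purchase.", rubric))  # []
print(judge("Refunds are available for 14 days after you buy.", rubric))      # []
print(len(judge("We guarantee a refund at any time.", rubric)))               # 2
```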

Step 5: Store metadata

Record source, bucket, severity, and any notes about why the case matters.
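One possible shape for that metadata is a small record stored one per line in a JSON Lines file, which diffs cleanly under version control. The `EvalCase` class and its field names are assumptions for this sketch, not a required schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class EvalCase:
    case_id: str
    input_text: str
    source: str    # e.g. "support_ticket", "product_requirement", "incident"
    bucket: str    # e.g. "happy_path", "known_regression"
    severity: str  # e.g. "high", "medium", "low"
    notes: str = ""  # why this case matters


def to_jsonl(cases: list[EvalCase]) -> str:
    """Serialize cases as one JSON object per line."""
    return "\n".join(json.dumps(asdict(c), sort_keys=True) for c in cases)


def from_jsonl(text: str) -> list[EvalCase]:
    """Parse JSON Lines text back into cases, skipping blank lines."""
    return [EvalCase(**json.loads(line)) for line in text.splitlines() if line.strip()]


# Hypothetical case for illustration only.
cases = [
    EvalCase(
        case_id="sum-014",
        input_text="Name | Qty\nWidget | 3\n\nRe: Re: order status",
        source="support_ticket",
        bucket="malformed_input",
        severity="high",
        notes="Mixed table and email thread; summary dropped the table.",
    )
]
restored = from_jsonl(to_jsonl(cases))
print(restored == cases)  # True
```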

Step 6: Review and prune

Remove duplicates and low-signal cases so the set stays maintainable.
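Exact and near-exact duplicates can be removed mechanically. The sketch below only catches inputs that are identical after collapsing whitespace and case. Spotting low-signal cases still needs human review. The names `normalize` and `prune_duplicates` are hypothetical.

```python
import re


def normalize(text: str) -> str:
    """Collapse runs of whitespace, trim, and lowercase."""
    return re.sub(r"\s+", " ", text).strip().lower()


def prune_duplicates(cases: list[dict]) -> list[dict]:
    """Keep the first case for each normalized input, preserving order."""
    seen = set()
    kept = []
    for case in cases:
        key = normalize(case["input"])
        if key in seen:
            continue
        seen.add(key)
        kept.append(case)
    return kept


# Hypothetical cases for illustration only.
cases = [
    {"id": "c1", "input": "Summarize this  thread."},
    {"id": "c2", "input": "summarize this thread. "},
    {"id": "c3", "input": "Summarize this table."},
]
print([c["id"] for c in prune_duplicates(cases)])  # ['c1', 'c3']
```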

Metrics, Checks, and Guardrails

Checks

- Test cases cover both expected behavior and historical failures.

- Each case has a clear pass/fail rubric or judgment rule.

- Buckets can be filtered for targeted debugging.

- The team can add new incidents to the set through a single, documented workflow.
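Some of these checks can run automatically before each evaluation. The sketch below assumes each case is a dictionary with hypothetical `id`, `bucket`, and `rubric` fields. It flags cases without a rubric and required buckets that have no cases, and shows a simple bucket filter.

```python
def check_test_set(cases: list[dict], required_buckets: list[str]) -> list[str]:
    """Return a list of problems; an empty list means the checks pass."""
    problems = []
    for case in cases:
        # An empty or missing rubric means there is no pass/fail rule.
        if not case.get("rubric"):
            problems.append(f"{case['id']}: no pass/fail rubric")
    present = {case.get("bucket") for case in cases}
    for bucket in sorted(set(required_buckets) - present):
        problems.append(f"no cases in bucket: {bucket}")
    return problems


def filter_bucket(cases: list[dict], bucket: str) -> list[dict]:
    """Select the cases in one bucket for targeted debugging."""
    return [case for case in cases if case.get("bucket") == bucket]


# Hypothetical cases for illustration only.
cases = [
    {"id": "c1", "bucket": "happy_path", "rubric": "Mentions the 14-day refund window."},
    {"id": "c2", "bucket": "known_regression", "rubric": ""},
]
problems = check_test_set(cases, ["happy_path", "known_regression", "malformed_input"])
print(problems)
# ['c2: no pass/fail rubric', 'no cases in bucket: malformed_input']
```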

Metrics

- Coverage by bucket - Shows where you are under-testing and where you may be overweighting easy examples.

- Pass rate by severity - High-severity failures should count for more than minor polish issues, so report them separately rather than folding them into one overall pass rate.

- Regression recurrence - Tracks whether the same failure class keeps reappearing after fixes.
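The three metrics above can be computed from plain records. This is a sketch under stated assumptions: cases carry a `bucket` field, results carry `severity` and `passed`, and run history is a list of sets of failing failure-class labels, oldest first. Here a recurrence means a class fails again after at least one run in which it did not fail. All function and field names are hypothetical.

```python
from collections import Counter


def coverage_by_bucket(cases: list[dict]) -> dict:
    """Share of the test set that falls into each bucket."""
    if not cases:
        return {}
    counts = Counter(case["bucket"] for case in cases)
    return {bucket: n / len(cases) for bucket, n in counts.items()}


def pass_rate_by_severity(results: list[dict]) -> dict:
    """Pass rate computed separately for each severity level."""
    totals, passes = Counter(), Counter()
    for result in results:
        totals[result["severity"]] += 1
        passes[result["severity"]] += int(result["passed"])
    return {severity: passes[severity] / totals[severity] for severity in totals}


def regression_recurrence(runs: list[set]) -> dict:
    """Count how often each failure class reappears after a run without it."""
    recurrences = Counter()
    ever_failed, previous = set(), set()
    for failing in runs:
        for label in failing:
            if label in ever_failed and label not in previous:
                recurrences[label] += 1
        ever_failed |= failing
        previous = failing
    return dict(recurrences)


# Hypothetical data for illustration only.
cases = [{"bucket": "happy_path"}, {"bucket": "happy_path"},
         {"bucket": "malformed_input"}, {"bucket": "known_regression"}]
results = [{"severity": "high", "passed": True},
           {"severity": "high", "passed": False},
           {"severity": "low", "passed": True}]
runs = [{"table_dropped"}, set(), {"table_dropped", "wrong_language"},
        {"wrong_language"}, set(), {"table_dropped"}]

print(coverage_by_bucket(cases)["happy_path"])  # 0.5
print(pass_rate_by_severity(results))           # {'high': 0.5, 'low': 1.0}
print(regression_recurrence(runs))              # {'table_dropped': 2}
```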

Production Trade-offs

- Large test set vs review time - More cases improve coverage but can slow iteration. Keep a smoke set and a deeper regression set.

- Exact answers vs rubric judgments - Exact matches are easy to automate but fragile; rubrics are robust but need reviewer discipline.

- Synthetic examples vs production examples - Synthetic examples help coverage; production examples improve realism. Use both.
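For the first trade-off, a smoke set can be derived from the full regression set instead of being maintained by hand. The sketch below picks the highest-severity cases from each bucket. The `select_smoke_set` helper, the severity labels, and the case fields are assumptions for illustration, and other selection rules may suit a given product better.

```python
SEVERITY_RANK = {"high": 0, "medium": 1, "low": 2}


def select_smoke_set(cases: list[dict], per_bucket: int = 1) -> list[dict]:
    """Pick up to `per_bucket` highest-severity cases from each bucket."""
    by_bucket = {}
    for case in cases:
        by_bucket.setdefault(case["bucket"], []).append(case)
    smoke = []
    for bucket in sorted(by_bucket):
        # Sort by severity first, then by id so the selection is deterministic.
        ranked = sorted(
            by_bucket[bucket],
            key=lambda c: (SEVERITY_RANK[c["severity"]], c["id"]),
        )
        smoke.extend(ranked[:per_bucket])
    return smoke


# Hypothetical cases for illustration only.
cases = [
    {"id": "c1", "bucket": "happy_path", "severity": "low"},
    {"id": "c2", "bucket": "happy_path", "severity": "high"},
    {"id": "c3", "bucket": "malformed_input", "severity": "medium"},
    {"id": "c4", "bucket": "malformed_input", "severity": "medium"},
]
print([c["id"] for c in select_smoke_set(cases)])  # ['c2', 'c3']
```

The smoke set can run on every change, while the full set runs before release.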

Example Scenario

A summarization feature passed internal tests, but in production users pasted messy tables and email threads that the internal tests had never covered. After the team added malformed and mixed-format inputs to the test set, regressions on those inputs became visible before release.

How This Fits With Related Articles

This post bridges the prompting and RAG articles and the newer paths on evaluation, LLMOps, security, and cost/performance. In practice, readers should move between Prompt Structure Patterns for Production, Output Control with JSON and Schemas, Retrieval Is the Hard Part, and Evaluating RAG Quality depending on where the failure occurs.

Related Context From This Site

These articles are directly relevant to this topic and connect it to the site's foundations, prompting, and RAG coverage.

- Debugging Bad Prompts Systematically

- Prompt Anti-patterns Engineers Fall Into

- Evaluating RAG Quality: Precision, Recall, and Faithfulness

- Why Models Hallucinate (And Why That’s Expected)

- Why Prompt Improvements Plateau Faster Than You Expect

Read Next

Build an Eval Harness for Prompt and RAG Changes (Without Overengineering It)

Shows how to build a lightweight evaluation harness for prompt and RAG changes so teams can compare revisions without slowing down shipping.